Introduction

A silent crisis is slowing the digital retail industry, specifically within the asset creation pipeline. For years, the standard workflow for creating 3D product models involved a skilled artist manually sculpting a sneaker or handbag vertex by vertex. The artist tried to recreate the chaotic texture of leather or the precise weave of canvas through subjective interpretation. This manual process is a bottleneck, and the resulting gap between model and product matters all the more when 30% of online products are returned, compared to just 8.89% in brick-and-mortar stores.

The industry is now moving away from imitating reality to digitizing it directly through "Reality Capture." This method replaces the artist's interpretation with objective optical data. In this article, we provide a technical tour of how we turn standard 2D inputs into volumetric 3D assets using our 3d-from-photo generator. We explain why this engineering approach renders traditional manual CAD obsolete for e-commerce by delivering superior fidelity and scalability. This obsolescence begins with the fundamental difference between building a model and capturing one.

Fundamental Shift: Construction vs. Reconstruction

Traditional CAD functions as a digital construction site. When an artist builds a model using Computer-Aided Design (CAD), they define the geometry mathematically from scratch. They start with a blank digital canvas and build the object polygon by polygon. This process resembles painting a portrait, where the artist observes a subject and applies paint to canvas to replicate it. The result, while mathematically perfect, often looks sterile because it lacks the chaotic irregularities of the real world.

Photogrammetry represents a shift to reconstruction. Instead of building an object from nothing, the software analyzes visual data to derive the geometry that already exists. This process resembles photography rather than painting, but with the added dimension of depth. The technology measures the object and reconstructs it based on optical facts rather than human decisions.

This difference in approach impacts efficiency. Retailers who rely on automatic 3D asset creation avoid the massive time investments required by manual modeling. For example, complex handbag models require 70+ hours to create, yet photogrammetry processes the same asset within 24 hours. The disparity grows with complexity. A case study on manual 3D rifle model creation showed that it required 110 hours of artist work, which included separate stages for modeling and texturing. Photogrammetry collapses these stages into a single, automated workflow. But how does this automation actually work?

Black Box Demystified: Photogrammetry & Computer Vision

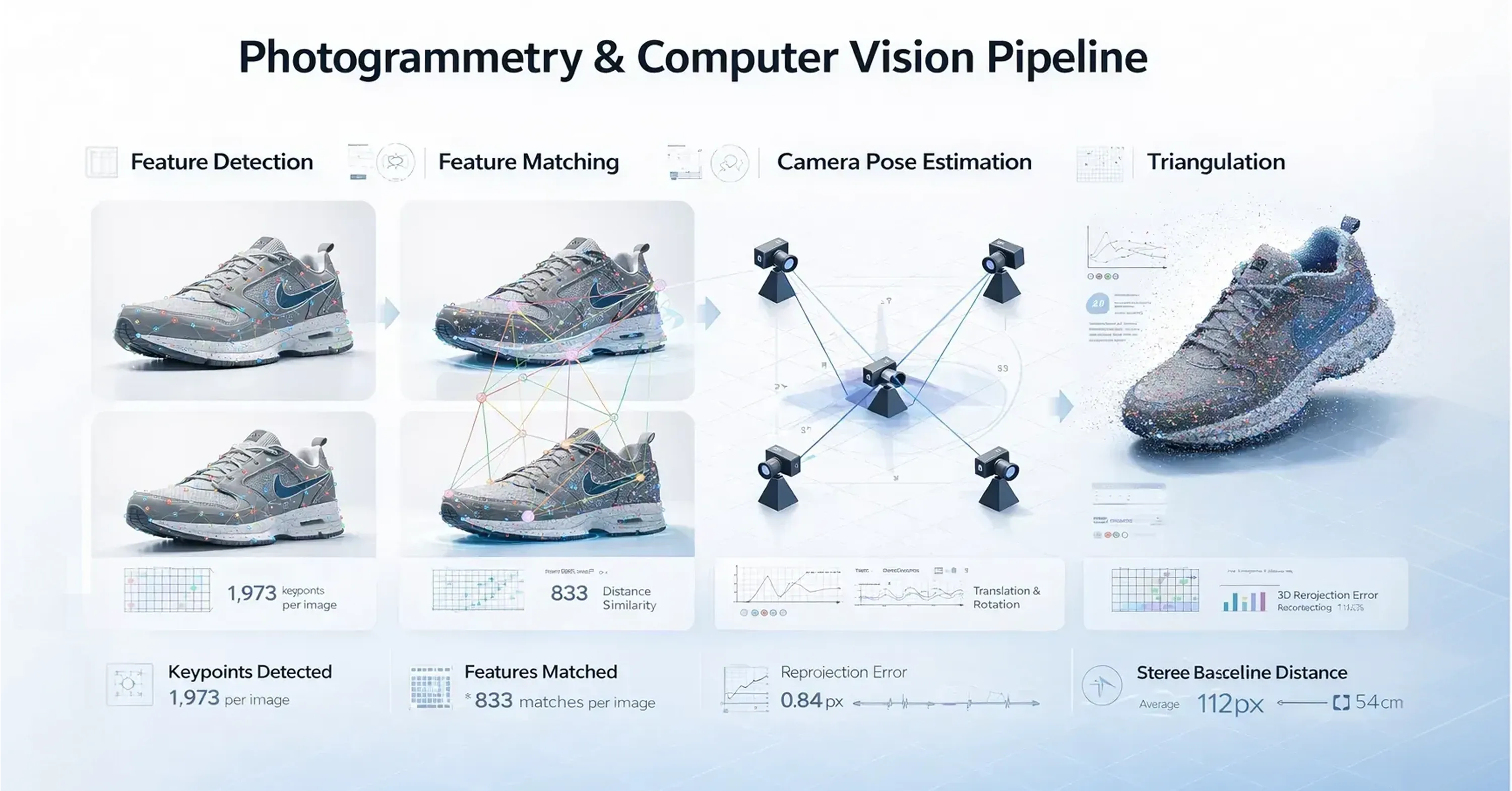

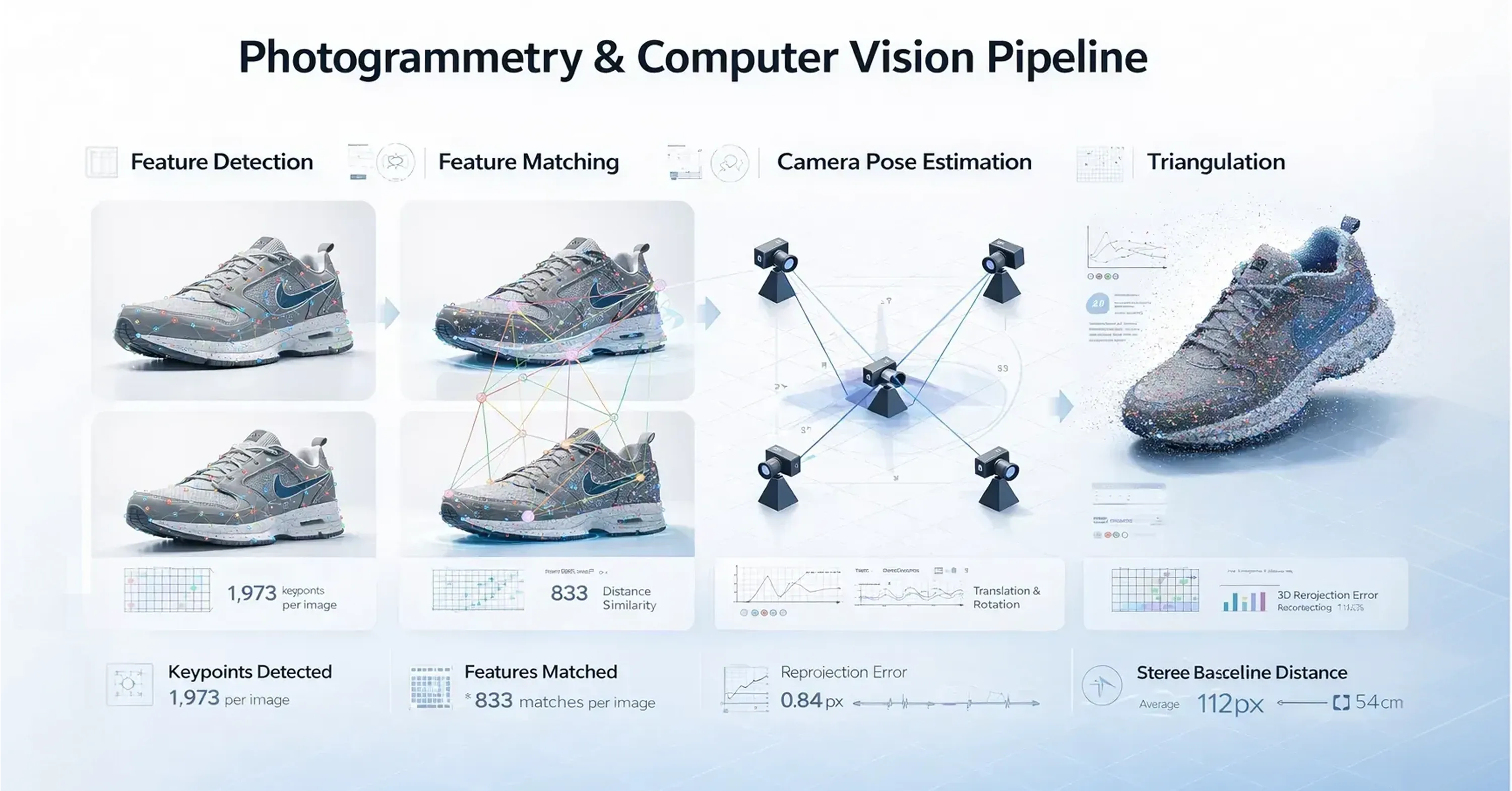

The 3d-from-photo generator operates on principles of computer vision rather than magic. The software doesn't "know" what a shoe or a bag looks like. Instead, it analyzes pixel patterns to understand the spatial relationship between photographs. The engine processes these images through a specific sequence of operations:

- Feature Detection: The system scans every image to find distinctive points, such as corners, edges, or unique texture variations.
- Feature Matching: Algorithms track these specific points across multiple overlapping images to establish connections.
- Geometry Generation: The software calculates the camera positions and the 3D coordinates of the matched points.

This process relies heavily on overlap. If a specific scratch on a leather boot appears in photographs A, B, and C, the software uses feature matching to confirm that these pixels represent the same physical point in space. Matching distinct points across multiple images in this way builds a sparse map of the object. In standard terminology, photogrammetry uses Structure-from-Motion to recover the camera positions and this sparse map, and Multi-View Stereo to turn the 2D images into dense 3D geometry. The quality of the final model depends on the density of these matched features.
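The matching step can be sketched in a few lines. This is a minimal illustration, not the generator's actual implementation: the toy 3-number descriptors, the `match_features` name, and the 0.75 ratio threshold are all assumptions made for the example. It pairs each descriptor in one image with its nearest neighbour in another and discards ambiguous pairs.

```python
import math

def match_features(desc_a, desc_b, ratio=0.75):
    """Match descriptors from image A to image B by nearest neighbour.

    A match is kept only if the best candidate is clearly closer than the
    runner-up (a ratio test; the 0.75 threshold is an assumed value).
    Returns a list of (index_in_a, index_in_b) pairs.
    """
    matches = []
    for i, da in enumerate(desc_a):
        # Distance from this descriptor to every descriptor in image B.
        dists = sorted((math.dist(da, db), j) for j, db in enumerate(desc_b))
        if len(dists) < 2:
            continue  # the ratio test needs at least two candidates
        (best, j), (second, _) = dists[0], dists[1]
        if best < ratio * second:
            matches.append((i, j))
    return matches

# Toy descriptors; real ones have dozens to hundreds of dimensions.
image_a = [(0.10, 0.90, 0.30), (0.80, 0.20, 0.50)]
image_b = [(0.82, 0.19, 0.52), (0.11, 0.88, 0.31), (0.50, 0.50, 0.50)]
matches = match_features(image_a, image_b)
```

Here the first feature of image A pairs with the second feature of image B and vice versa, while a feature that sits equally close to two candidates would be rejected as ambiguous.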

Pixels to Point Clouds

Once the system has identified the camera positions and matched features, it must calculate depth. The software uses triangulation to determine where each point sits in 3D space. For every camera that sees a feature, it projects a ray from the camera center through that feature's position on the image plane. The point where these rays intersect in digital space (or, with real, noisy measurements, where they pass closest to one another) becomes a vertex.
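As a rough sketch of that idea, the function below triangulates one point from two rays using the midpoint method: it finds the closest points on the two rays and returns the point halfway between them. The function name, the two-camera setup, and the choice of the midpoint method are assumptions for illustration; production pipelines typically solve this jointly over many views.

```python
def triangulate_midpoint(origin1, dir1, origin2, dir2):
    """Return the 3D point closest to two rays (midpoint method).

    Each ray starts at a camera center (origin) and points through the
    matched feature (direction). Raises ValueError for parallel rays.
    """
    def dot(u, v):
        return sum(a * b for a, b in zip(u, v))

    w = tuple(a - b for a, b in zip(origin1, origin2))
    a, b, c = dot(dir1, dir1), dot(dir1, dir2), dot(dir2, dir2)
    d, e = dot(dir1, w), dot(dir2, w)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; depth cannot be recovered")
    s = (b * e - c * d) / denom  # distance parameter along ray 1
    t = (a * e - b * d) / denom  # distance parameter along ray 2
    p1 = tuple(o + s * k for o, k in zip(origin1, dir1))
    p2 = tuple(o + t * k for o, k in zip(origin2, dir2))
    return tuple((u + v) / 2 for u, v in zip(p1, p2))

# Two cameras one unit left and right of the origin, both seeing a
# feature that lies five units in front of them.
vertex = triangulate_midpoint((-1, 0, 0), (1, 0, 5), (1, 0, 0), (-1, 0, 5))
```

The parallel-ray check reflects why overlap from different viewpoints matters: two photographs taken from the same direction give no depth information.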

This process happens millions of times. The generator calculates these intersections for every matched pixel group and creates a Dense Point Cloud. This cloud isn't a solid surface, but a collection of millions of measurements that describe the shape of the object. For retailers implementing a 2D-to-3D generator for footwear, this point cloud provides the geometric foundation that ensures the digital shoe matches the physical sample down to the millimeter. However, geometry alone doesn't create a photorealistic asset; the surface detail is equally critical.

Texture Maps vs. Painted Textures

A bottleneck in traditional CAD comes from the texturing phase. After an artist builds the shape of a model, they must wrap it in digital materials. To make a synthetic leather bag look real, the artist must paint imperfections. They add scuffs, discoloration, and wrinkles with digital brushes. This process remains an interpretation of reality. The artist decides where a scratch should go, which means the digital asset reflects the artist's skill rather than the product's actual condition.

Photogrammetry removes this subjectivity and records the surface as the camera saw it. The photogrammetry 3D process projects the original photographs back onto the geometric mesh. This captures the light, shadows, weave, and physical defects of the manufactured item. For example, tools like RealityCapture have been reported to process 1,550 high-resolution photographs into a complex 3D mesh with automatic textures.
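To make the projection step concrete, here is a minimal sketch that looks up the color of a mesh vertex in one photograph. It assumes an idealized pinhole camera at the origin looking along +Z, with no lens distortion; the function names and the tiny 3x3 "photo" are hypothetical, and a real pipeline blends many views and checks for occlusion.

```python
def project_point(point, focal_px, center):
    """Project a 3D point (camera coordinates) to pixel coordinates (u, v).

    Returns None when the point is behind the camera.
    """
    x, y, z = point
    if z <= 0:
        return None
    u = focal_px * x / z + center[0]
    v = focal_px * y / z + center[1]
    return u, v

def sample_color(image, point, focal_px, center):
    """Return the pixel color the point projects onto, or None if off-image."""
    uv = project_point(point, focal_px, center)
    if uv is None:
        return None
    col, row = round(uv[0]), round(uv[1])
    if 0 <= row < len(image) and 0 <= col < len(image[0]):
        return image[row][col]
    return None

# A 3x3 photo stored as rows of RGB tuples (placeholder values).
photo = [
    [(10, 10, 10), (20, 20, 20), (30, 30, 30)],
    [(40, 40, 40), (50, 50, 50), (60, 60, 60)],
    [(70, 70, 70), (80, 80, 80), (90, 90, 90)],
]
color = sample_color(photo, (0.5, 0.0, 1.0), focal_px=2.0, center=(1.0, 1.0))
```

Because the color comes straight from the photograph, any scuff or weave visible at that pixel ends up in the texture without an artist deciding where it goes.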

This method solves the material accuracy issue that plagues e-commerce returns. The customer sees the real material response, not a simulation. Modern viewers use physically-based rendering to simulate material properties accurately, but the renderer needs accurate base maps to work correctly. Brands ensure that the digital twin behaves exactly like the physical product under different lighting conditions because they derive these maps from optical data. High-fidelity textures require a high-fidelity mesh, but this creates a new problem.

Topology Challenge

Raw photogrammetry data consists of millions of unorganized triangles, and this creates a file too heavy for mobile browsers to render smoothly. This density presents a technical challenge known as bad topology, where the geometric mesh is too complex for real-time applications. To fix this, the 3d-from-photo generator employs a pipeline that converts raw scan data into optimized, quad-based meshes. This process, often called retopology, redraws the geometry of the object to use fewer polygons while it maintains the original shape.

Optimizing models is critical for performance on consumer devices. Retailers who use automatic 3D asset creation tools rely on this automated reduction to ensure customers can view products on their phones without lag. While a desktop computer might handle high-density models, 3D assets for mobile platforms typically target 5,000 to 10,000 polygons, compared to the 50,000 to 100,000 polygons used for desktop rendering.
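The arithmetic behind these budgets is simple enough to sketch. The budgets below are the ranges quoted above; the `decimation_ratio` helper and the 2,000,000-triangle raw scan are hypothetical values chosen for illustration.

```python
# (lower, upper) polygon budgets per platform, as quoted in the text.
POLYGON_BUDGETS = {
    "mobile": (5_000, 10_000),
    "desktop": (50_000, 100_000),
}

def decimation_ratio(source_polygons, platform):
    """Fraction of polygons to keep to reach the platform's upper budget.

    Returns 1.0 when the source mesh already fits the budget.
    """
    _, upper = POLYGON_BUDGETS[platform]
    return min(1.0, upper / source_polygons)

raw_scan_polygons = 2_000_000  # assumed size of a raw scan
mobile_keep = decimation_ratio(raw_scan_polygons, "mobile")
desktop_keep = decimation_ratio(raw_scan_polygons, "desktop")
```

Under these assumptions the mobile asset keeps about 0.5% of the scanned triangles and the desktop asset about 5%, which is why the reduction has to be automated rather than done by hand.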

The software achieves this balance through polygon reduction and texture atlasing. Texture atlasing combines multiple texture images into a single file. This optimizes 3D models for real-time web and mobile performance because it reduces the number of draw calls the graphics processor must handle. This optimization ensures that the high-fidelity scan loads instantly on a customer's device.
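As an illustration of atlasing, the sketch below assigns each texture a position inside one shared image using a simple "shelf" layout: textures are placed left to right and wrap to a new row when the current one is full. The shelf strategy, the function name, and the texture sizes are assumptions for the example; real packers use tighter layouts.

```python
def pack_atlas(sizes, atlas_width):
    """Place (width, height) textures into rows of a single atlas.

    Returns (placements, atlas_height), where placements holds the (x, y)
    offset of each texture in input order.
    """
    placements = []
    x = y = shelf_height = 0
    for w, h in sizes:
        if w > atlas_width:
            raise ValueError("texture is wider than the atlas")
        if x + w > atlas_width:
            # Current row is full: start a new shelf below it.
            y += shelf_height
            x = shelf_height = 0
        placements.append((x, y))
        x += w
        shelf_height = max(shelf_height, h)
    return placements, y + shelf_height

textures = [(512, 512), (512, 256), (256, 256), (1024, 128)]
placements, atlas_height = pack_atlas(textures, atlas_width=1024)
```

Four separate texture files become one image here, so a renderer that previously needed a texture bind per material can draw the object with a single one.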

Compression Standards

Efficient delivery requires advanced compression standards that reduce file weight without degrading visual quality. After the software optimizes the topology, it must compress the data for transmission over the internet. The industry standard for this task is the glTF format combined with Draco compression. Draco is a library for compressing and decompressing 3D geometric meshes and point clouds.

The impact of this technology on file size is substantial. Cesium reports that Draco mesh compression reduces glTF file size by as much as 80-90% without visible quality loss. This reduction allows e-commerce platforms to serve high-definition 3D assets over standard 4G networks. Consequently, the browser downloads the asset quickly, and the consumer views the product immediately. Fast loading times, however, represent only one aspect of efficiency.
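One ingredient that lossy geometry compressors such as Draco rely on is quantization: storing each coordinate as a small integer instead of a 32-bit float. The sketch below shows the idea only and is not Draco's implementation; the function names and the 11-bit setting are assumptions for the example.

```python
def quantize(values, bits):
    """Map floats to integer codes in [0, 2**bits - 1].

    Returns (codes, offset, scale) so the values can be reconstructed.
    """
    lo, hi = min(values), max(values)
    levels = (1 << bits) - 1
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Reconstruct approximate floats from integer codes."""
    return [lo + c * scale for c in codes]

coords = [0.0, 0.1234, 0.5, 1.0]  # one axis of a few vertex positions
codes, offset, step = quantize(coords, bits=11)
restored = dequantize(codes, offset, step)
max_error = max(abs(a - b) for a, b in zip(coords, restored))
```

With 11 bits, each coordinate is off by at most half a step, about 0.024% of the object's extent along that axis, which is why the loss is hard to see while the stored data shrinks considerably.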

Scalability and Time-to-Market

Speed becomes capital in the fast-paced e-commerce environment, where trends shift rapidly and catalogs expand seasonally. Traditional manual CAD modeling suffers from linear time costs. If a skilled artist requires 20 hours to model one complex sneaker, then modeling 1,000 sneakers requires 20,000 hours. Hiring more artists can increase output, but it also increases management overhead and costs. In contrast, the photogrammetry 3D approach relies on computational scalability. The software processes data in parallel, which means it can digitize hundreds of items simultaneously if the server infrastructure supports it.
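The scaling argument can be worked through as arithmetic. The sketch below uses the 20-hour manual figure from this section and the 24-hour processing figure quoted earlier; the two helper functions and the figure of 100 parallel jobs are hypothetical.

```python
import math

def manual_hours(items, hours_per_item, artists=1):
    """Wall-clock hours for manual modeling, split evenly across artists."""
    return items * hours_per_item / artists

def pipeline_hours(items, hours_per_item, parallel_jobs):
    """Wall-clock hours when items are processed in parallel batches."""
    batches = math.ceil(items / parallel_jobs)
    return batches * hours_per_item

single_artist = manual_hours(1_000, 20)                 # 20,000 hours
ten_artists = manual_hours(1_000, 20, artists=10)       # 2,000 hours
automated = pipeline_hours(1_000, 24, parallel_jobs=100)  # 240 hours
```

Adding artists divides the manual total but multiplies payroll, whereas adding parallel jobs is bounded only by the server capacity mentioned above.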

Automated solutions dramatically outpace manual workflows. Technical comparisons show that RealityCapture delivers 10-50x faster processing speed than competing photogrammetry solutions, and it is faster still when compared with manual creation. Brands that need affordable 3D product digitization for retailers choose automation to defend their margins.

While manually operated photogrammetry processing typically takes 24 hours, and up to 72 hours for high-volume projects, fully automated pipelines can reduce this to minutes per asset. This throughput lets brands digitize entire seasonal collections in days rather than months, so the digital shelf is ready as soon as the physical product arrives in the warehouse. Rapid production means nothing, however, if the resulting assets fail to convert customers.

Trust Through Digital Fidelity

The gap between digital representation and physical reality erodes consumer trust and damages profit margins. Shoppers frequently return items because the product they receive looks different from the image they saw online. In fact, research indicates that products appearing different in person than online cause 80% of customer returns. Traditional CAD models contribute to this problem because they are idealized interpretations. An artist might unconsciously smooth out a rugged fabric or perfect a seam, which creates a false perception of the product’s quality.

A 3d-from-photo generator solves this by creating a digital twin that mirrors the physical item exactly. The scanner doesn't interpret. It captures. If the leather has a grain variation or the stitching is slightly uneven, the digital model shows it. This authenticity helps customers make informed purchasing decisions.

When shoppers feel confident that they understand the product, they're more likely to buy and less likely to return. Data supports the value of this high-fidelity experience and shows that 75% of online shoppers are willing to pay a premium for products they can experience via AR technology. By presenting an honest digital twin, brands build a transparent relationship with their customers.

Conclusion

We trade the subjective interpretation of an artist for the objective accuracy of optical data when we switch to automated photogrammetry. This technological shift does more than just produce pretty pictures. It fundamentally changes the economics of e-commerce. By generating a digital twin that reflects the true physical properties of a product, brands can significantly reduce return rates and accelerate their asset creation pipelines.

The 3d-from-photo generator ensures that the digital representation matches the physical reality, imperfections and all. Looking ahead, as spatial computing and agentic commerce rise, the demand for these high-fidelity digital twins will far outpace the supply of human 3D modelers. This technology ensures brands are ready for that future today.