Introduction

Online stores struggle with high return rates because shoppers cannot accurately judge product proportions from flat images alone. Fashion e-commerce experiences 20% to 30% return rates, and this directly damages profitability. Accessories present a specific challenge since buyers need to see how a strap drops across their shoulder or whether a tote overwhelms their frame. While digital try-on tools have proven effective for footwear, brands often delay handbag try-on implementation because they assume the technology requires massive enterprise budgets.

Today, Gen AI conversion tools have lowered these financial barriers. This guide provides a practical integration roadmap. We examine the exact visualization anxieties that cause returns, break down the real costs of 3D asset creation, and explain how to deploy these tools across mobile platforms. Proper integration creates certainty for shoppers and protects margins for retailers. Let us review the technical steps that build this experience.

Why Accessories Demand Specific Visualization Solutions

Standard apparel fitting tools measure garments against body dimensions, but handbags do not drape over a torso or wrap around a waist. Handbags hang, rest, tuck under an arm, or cross a chest. Each position changes how the bag interacts with a specific body frame. This spatial relationship drives the purchase decision more than color or brand.

Proportion presents the first problem. A crossbody bag looks sleek on a tall model but can overwhelm a petite frame, and standard product photos show only one body type. Strap drop presents the second challenge. The distance from the shoulder to the bag's resting place determines comfort and appearance. This distance varies across body types, and a size chart cannot capture these variations. Capacity perception presents the third gap. Shoppers want to know whether a wallet, phone, and keys fit inside. Flat-lay images distort this interior space.

These concerns carry real financial weight. Shopify reports that handbag revenue reaches $2.73 per visit, the highest amount among fashion e-commerce categories, so each lost sale carries an outsized cost. A handbag try-on experience addresses spatial relationships on mobile devices and replaces guesswork with a concrete preview. Shoppers see a bag against their own proportions and trust the product before checkout. This trust translates directly into fewer returns.

Market Opportunity for Handbag Try-On

Luxury houses adopted virtual handbag fitting early, but mid-range and independent tiers have barely entered the space. This gap represents a significant missed opportunity. Shoppers who buy mid-priced accessories face the same visualization uncertainties, and they generate higher return rates than luxury buyers, who often purchase items in physical stores.

Broader market signals point the same way. Retail Dive reports that the virtual try-on market is projected to reach $48.8 billion by 2030, with much of this growth expected from categories beyond apparel and footwear. Spring 2026 fashion forecasts prioritize accessory-led styling. This shift places handbags at the center of purchase intent rather than on the periphery.

Mobile shopping now accounts for roughly 60% of global e-commerce transactions. Shoppers scroll on phone screens and struggle to judge scale and proportion from product photography. Virtual handbag fitting closes this perception gap and shows the product in a spatial context.

Companies that delay implementation risk missing an important technology shift while competitors that offer the assurance buyers need capture more market share. Accurate try-on experiences convert hesitation into completed orders. Retailers that move first in the mid-range tier reach customers that luxury-only solutions currently ignore.

Identification of Specific Fit Anxieties with Handbag Try-On

Before selecting a technology platform, companies need to understand the exact questions their product pages leave unanswered. An AR bag preview solves nothing if it ignores the specific anxieties that cause shoppers to abandon carts or return purchases.

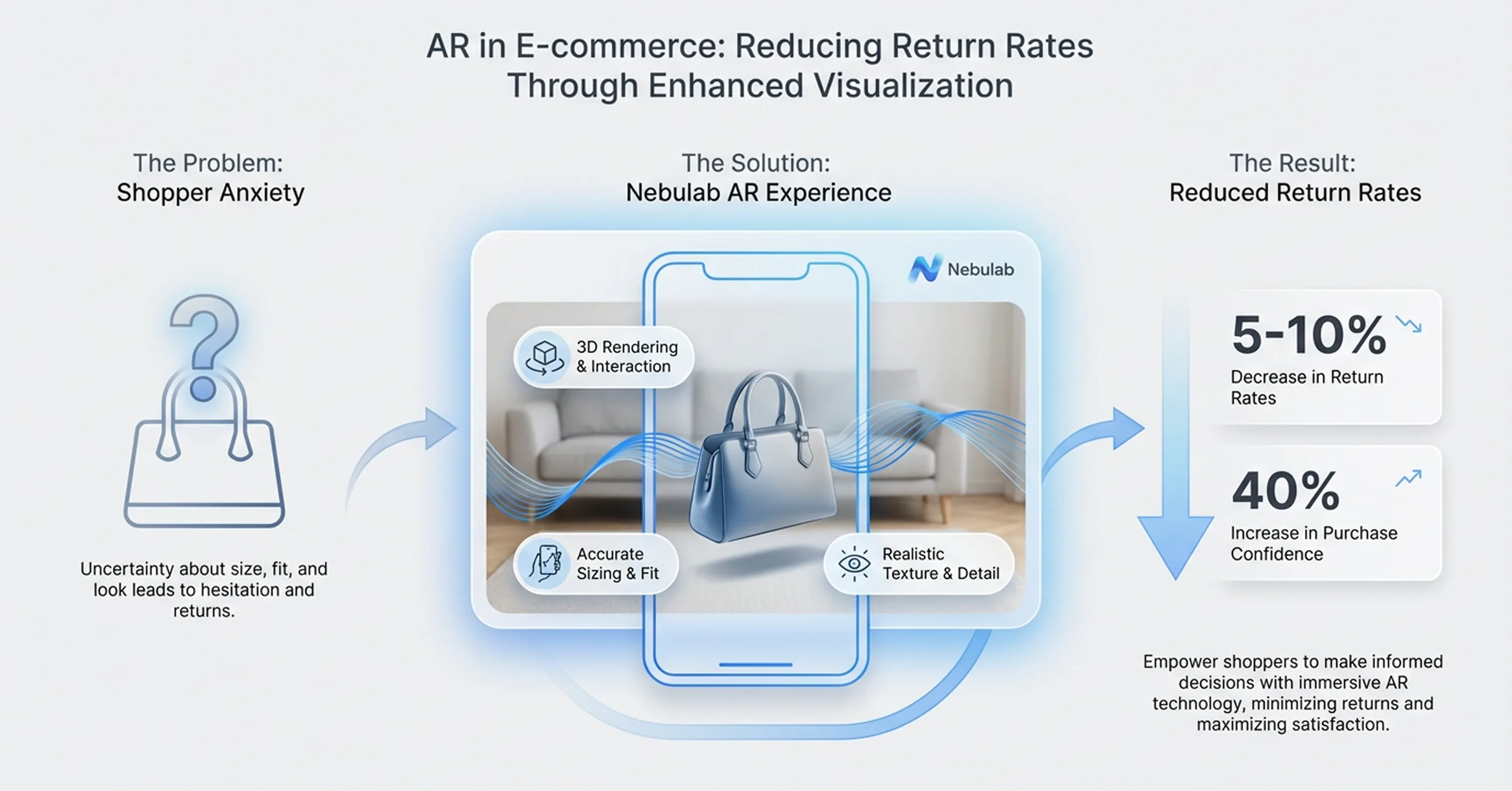

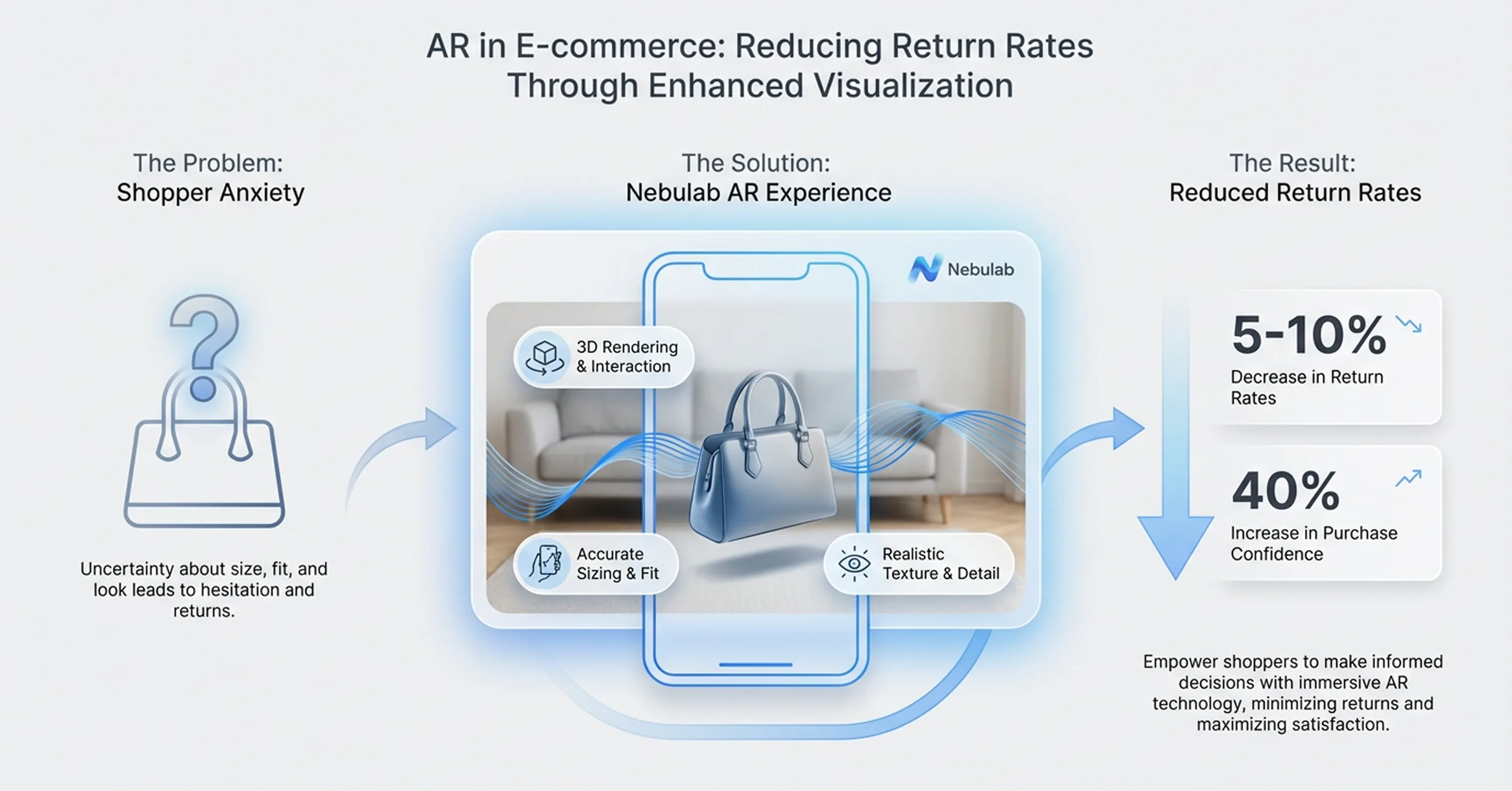

Research from Nebulab shows that products with AR visualization experience 5% to 10% lower returns, with reductions reaching 40% in specific categories. The larger reductions occur when the visualization directly answers the shopper's unresolved concern. The precision of the solution matters more than its novelty.

We can map each anxiety to a software capability and help companies evaluate their non-negotiable features:

- Scale and proportion: Shoppers want to see the bag against their own body instead of a generic silhouette. The try-on software overlays the product at accurate dimensions relative to the specific body frame. This process requires spatial tracking rather than a simple image overlay.
- Strap length and drop: A shoulder bag's entire aesthetic changes based on its resting place. The software simulates strap physics. Buyers can then judge whether the bag sits at the hip, waist, or ribcage on their specific build.
- Capacity and dimensions: Flat-lay photography compresses depth perception. Effective AR bag preview tools render the bag in three dimensions. Shoppers rotate the item and gauge interior volume against familiar reference points.
- Material appearance and hardware finish: Leather grain, metallic clasps, and woven textures behave differently under various lighting conditions. The reliability of material rendering determines whether the digital preview matches the physical product upon arrival.

Economics With Real Integration Costs

Cost has historically served as the primary barrier to visualization adoption outside the luxury segment. Traditional 3D scanning required physical hardware, specialized operators, and hours of post-production per product. A single handbag cost upward of $500 to digitize due to labor and equipment expenses.

Gen AI 2D-to-3D converters have compressed this cost structure. A basic 3D product render costs $50 to $250 per image with a one-to-three business day turnaround. Assuming one render per SKU, a catalog of 50 to 200 handbag SKUs implies an asset creation budget of roughly $2,500 to $50,000, an amount many mid-size retailers can absorb within a single quarter.

Integration costs include software licensing, developer hours for platform setup, and ongoing maintenance. Most Shopify and WooCommerce plugins charge monthly subscription fees between $50 and $500 based on feature depth and traffic volume. Developer time for the initial setup typically runs 20 to 60 hours. This timeframe varies based on the customization level of the product page experience.
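The ranges above can be combined into a rough first-year estimate. The sketch below is illustrative only: the `CostRange` type and `first_year_cost` function are hypothetical names, the $100 hourly developer rate is an assumption, one render per SKU is assumed, and ongoing maintenance is left out because the article gives no figure for it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRange:
    """A low/high dollar estimate (hypothetical helper type)."""
    low: float
    high: float

    def __add__(self, other: "CostRange") -> "CostRange":
        return CostRange(self.low + other.low, self.high + other.high)


def first_year_cost(sku_count: int, hourly_rate: float) -> CostRange:
    """Estimate first-year spend from the ranges quoted in this section."""
    # $50 to $250 per render, assuming one render per SKU.
    assets = CostRange(sku_count * 50, sku_count * 250)
    # $50 to $500 per month in plugin subscription fees.
    plugin = CostRange(12 * 50, 12 * 500)
    # 20 to 60 developer hours for initial setup.
    setup = CostRange(20 * hourly_rate, 60 * hourly_rate)
    return assets + plugin + setup


# Assumed inputs: a 50-SKU catalog and a $100/hour developer rate.
estimate = first_year_cost(sku_count=50, hourly_rate=100.0)
print(f"${estimate.low:,.0f} to ${estimate.high:,.0f}")
```

Keeping the low and high bounds separate makes it easier to see which line item dominates as the catalog grows.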

We can see the soundness of this investment when we measure it against return logistics. Consider a company that ships 1,000 handbags per month at a 25% return rate, where each return costs $15 to process. That is 250 returns and $3,750 in monthly return handling expenses. A 15% relative reduction in returns saves about $560 per month, or $6,750 annually. This figure excludes the conversion lift from increased buyer confidence. Companies can model their own numbers against these benchmarks and identify their break-even point before they commit to a vendor and start the step-by-step implementation roadmap.
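The return-cost arithmetic above can be reproduced with a few lines of Python so that companies can substitute their own numbers. This is a minimal sketch: the function names are hypothetical, the 15% figure is treated as a relative reduction in returns, and the upfront cost and monthly fee in the payback example are assumed values, not benchmarks.

```python
from typing import Optional


def monthly_return_cost(units_shipped: int, return_rate: float,
                        cost_per_return: float) -> float:
    """Monthly spend on processing returned units."""
    return units_shipped * return_rate * cost_per_return


def annual_savings(units_shipped: int, return_rate: float,
                   cost_per_return: float, relative_reduction: float) -> float:
    """Yearly savings if returns fall by `relative_reduction` (0.15 = 15%)."""
    baseline = monthly_return_cost(units_shipped, return_rate, cost_per_return)
    return baseline * relative_reduction * 12


def payback_months(upfront_cost: float, monthly_fee: float,
                   monthly_savings: float) -> Optional[float]:
    """Months to recover the upfront cost, or None if fees exceed savings."""
    net = monthly_savings - monthly_fee
    if net <= 0:
        return None
    return upfront_cost / net


# Figures from the scenario in the text.
baseline = monthly_return_cost(1000, 0.25, 15.0)   # 3750.0
savings = annual_savings(1000, 0.25, 15.0, 0.15)   # 6750.0
print(f"Monthly return cost: ${baseline:,.0f}")
print(f"Annual savings: ${savings:,.0f}")

# Assumed costs for illustration: $5,000 upfront, $100/month subscription.
months = payback_months(5000.0, 100.0, savings / 12)
print(f"Payback period: {months:.1f} months")
```

Because the conversion lift is excluded, this payback figure is conservative on the benefit side of the calculation.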

Step-by-Step Implementation Roadmap for Handbag Try-On

The most effective handbag try-on deployments follow a phased approach instead of a full-catalog launch on day one. Phased rollouts reduce risk, surface technical issues early, and generate performance data. This data informs each subsequent phase.

We have structured this roadmap into three stages that cover planning, asset production, and deployment. Each stage builds on the previous one. The entire process typically spans six to twelve weeks based on catalog size and platform complexity.

Prioritization serves as the first principle. Not every handbag in a catalog needs a 3D model immediately. Products with the highest return rates, highest traffic, or strongest sales volume enter the pipeline first. These SKUs generate the most data and deliver the fastest return on investment. A Shopify-based store can often launch with 20 priority SKUs and expand later based on measured results.

Precision in the planning phase prevents expensive corrections later. Companies that rush past catalog auditing and platform evaluation often discover compatibility issues or asset quality problems mid-deployment. These discoveries cost more time and money than the planning phase itself. Methodical preparation separates successful rollouts from abandoned pilots. This preparation starts when companies plan their strategy and select their platform.

Plan Strategy Plus Platform Selection

The planning phase starts with a catalog audit. We evaluate return rate data, page traffic, and conversion rates for every handbag SKU and rank them. Products that combine high traffic with high return rates serve as the strongest candidates for digital visualization. These products offer the clearest measurement signal after launch.
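One way to turn the audit into a ranked list is to score each SKU by its estimated monthly returns, which combines traffic, conversion rate, and return rate in a single number. The sketch below is one possible heuristic, not a prescribed method; the `SkuStats` type, the scoring formula, and the sample catalog are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SkuStats:
    sku: str
    monthly_sessions: int
    conversion_rate: float  # orders divided by sessions
    return_rate: float      # returned orders divided by orders

    @property
    def estimated_monthly_returns(self) -> float:
        """Sessions x conversion x return rate: units likely to come back."""
        return self.monthly_sessions * self.conversion_rate * self.return_rate


def rank_candidates(catalog: List[SkuStats], limit: int = 20) -> List[SkuStats]:
    """Return the SKUs with the most estimated returns, highest first."""
    ranked = sorted(catalog, key=lambda s: s.estimated_monthly_returns,
                    reverse=True)
    return ranked[:limit]


# Made-up sample data for illustration.
catalog = [
    SkuStats("tote-classic", 12000, 0.020, 0.30),
    SkuStats("crossbody-mini", 9000, 0.025, 0.35),
    SkuStats("clutch-evening", 2000, 0.030, 0.10),
]

for item in rank_candidates(catalog):
    print(item.sku, round(item.estimated_monthly_returns, 2))
```

The default limit of 20 mirrors the launch size suggested earlier for a Shopify-based store. A team that cares more about measurement signal than return volume could swap in a different score without changing the rest of the code.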

Platform selection depends on the required visualization type. Overlay methods layer a 2D image of the bag onto a photo or live camera feed. These solutions deploy faster and cost less, but they sacrifice depth perception and strap movement. Full spatial tracking positions a 3D model in the user's real environment. This method provides accuracy in proportion and scale but introduces longer load times and more complex integration.

The right choice depends on catalog complexity. Companies that sell structured bags with minimal strap variation may find overlay methods sufficient. Companies that offer crossbodies, shoulder bags, and adjustable-strap styles benefit more from spatial tracking because strap drop and body interaction serve as primary purchase factors. After companies select the platform, they must manage asset creation and conversion.

Asset Creation Plus Conversion

Gen AI tools have changed the cost equation for 3D model generation. The process starts with high-resolution source images. Photographers capture six to twelve angles per product against a neutral background with consistent lighting.

Quality requirements matter. Stitching details, clasp finishes, and leather texture must remain visible in the source photographs because the conversion algorithm extracts surface data from these images. Blurry or poorly lit source material causes the 3D output to misrepresent the product. This misrepresentation drives the same returns the technology originally aimed to prevent.

Pricing benchmarks vary by source and method. Innovecs Games reports that handbag 3D model costs start at $300 and reach $1,200 at 2026 pricing benchmarks, and these prices fluctuate based on detail complexity and turnaround speed. Validation serves as the final step. Reviewers compare each model against the physical product to check texture reliability, hardware color accuracy, and dimensional proportion before the model enters the live environment. The approved models then move into the platform integration phase.
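The dimensional part of this validation step lends itself to a simple automated check. The sketch below assumes reviewers record measurements of the physical bag and of the 3D model in centimeters; the function names, the 2% tolerance, and the sample measurements are hypothetical. Texture and hardware color still need a human reviewer.

```python
from typing import Dict, Mapping


def dimension_errors(physical_cm: Mapping[str, float],
                     model_cm: Mapping[str, float]) -> Dict[str, float]:
    """Relative error per measurement between the model and the real bag."""
    return {
        name: abs(model_cm[name] - physical_cm[name]) / physical_cm[name]
        for name in physical_cm
    }


def passes_dimension_check(physical_cm: Mapping[str, float],
                           model_cm: Mapping[str, float],
                           tolerance: float = 0.02) -> bool:
    """True when every measurement is within the (assumed) tolerance."""
    errors = dimension_errors(physical_cm, model_cm)
    return all(err <= tolerance for err in errors.values())


# Made-up measurements for illustration.
physical = {"width": 30.0, "height": 22.0, "depth": 12.0, "strap_drop": 55.0}
model = {"width": 30.3, "height": 22.1, "depth": 12.6, "strap_drop": 55.0}

for name, err in dimension_errors(physical, model).items():
    print(f"{name}: {err:.1%}")
print("approved" if passes_dimension_check(physical, model) else "needs rework")
```

In this sample the depth measurement is off by 5%, so the model would go back for rework before entering the live environment.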

Mobile Shopper Optimization for Handbag Try-On

Smartphone users generate the majority of e-commerce traffic, and the AR bag preview experience must match their constraints first. BrandXR reports that 90% of American shoppers already use AR or are open to using it for shopping, and this readiness means the technology must meet them where they already browse.

Camera permission flows need careful design. A prompt that fires the instant a page loads feels invasive and drives users away. A better approach triggers the permission request only after the shopper taps a clearly labeled try-on button because that tap signals intent. Loading speed presents a second constraint. 3D assets compressed only to desktop bandwidth targets choke mobile connections. Models should render in under three seconds on a standard 4G connection.
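The three-second target can be translated into a rough file-size budget for each 3D asset. The sketch below is a back-of-the-envelope calculation under stated assumptions: the 10 Mbps effective throughput and the one second reserved for parsing and rendering are illustrative values, not measurements, and real 4G speeds vary widely by region and network load.

```python
def max_asset_size_mb(throughput_mbps: float,
                      budget_seconds: float = 3.0,
                      processing_seconds: float = 1.0) -> float:
    """Largest download, in megabytes, that fits the time budget."""
    download_seconds = budget_seconds - processing_seconds
    if download_seconds <= 0 or throughput_mbps <= 0:
        return 0.0
    # Megabits per second divided by 8 gives megabytes per second.
    return download_seconds * throughput_mbps / 8


def fits_budget(asset_size_mb: float, throughput_mbps: float) -> bool:
    """Check one asset against the default three-second budget."""
    return asset_size_mb <= max_asset_size_mb(throughput_mbps)


# Assumed effective mobile throughput of 10 Mbps.
print(f"Size budget: {max_asset_size_mb(10.0):.1f} MB")
print("4.0 MB model fits:", fits_budget(4.0, 10.0))
print("2.0 MB model fits:", fits_budget(2.0, 10.0))
```

Running this check in the asset pipeline flags oversized models before they reach a product page.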

When a device lacks support for the full experience, a graceful fallback preserves usability and prevents a blank screen or an error message from appearing. This fallback usually involves a static overlay that shows the bag at an approximate scale.

Web-based AR runs directly in a mobile browser and requires no application download, and this direct access removes friction. Native application integrations offer better performance and higher conversion rates, but they demand that shoppers install an application first. We see most brands launch with browser-based AR to build confidence in the feature's value before they invest in a dedicated application experience. However, even the best mobile experience still faces material render limitations.

Material Render Limitations

Not all handbag materials translate into digital models with equal precision. Smooth and full-grain leather renders reliably because its surface reflects light in predictable and uniform patterns. The AR bag preview algorithm can map these reflections accurately across different device screens and ambient lighting conditions.

Woven textures, metallic hardware, and patent finishes introduce complexity. Woven materials such as rattan or tweed contain micro-shadows between individual fibers, and most render engines simplify or flatten these shadows. Metallic clasps and chain straps reflect environmental light in ways that shift based on the shopper's surroundings, and this shift means a gold clasp can look bronze on screen when a shopper views it in a dimly lit room. Patent leather amplifies this inconsistency because its mirror-like surface picks up color from nearby objects.

These limitations do not disqualify the technology, but they demand honest communication. When a brand publishes a digital preview that overpromises on material appearance, the physical product disappoints at delivery. This disappointment drives the same returns the tool was built to prevent.

We suggest brands calibrate render settings per material type and disclose that screen appearance may vary under different lighting conditions to protect shopper assurance. Brands that acknowledge what the technology does well and where it approximates earn more credibility than those that pretend every render is flawless. This credibility ultimately drives the financial performance that brands track during post-launch ROI measurement.

Post-Launch ROI Measurement

Engagement metrics such as page views and time-on-page tell us whether shoppers interact with virtual handbag fitting, but they do not reveal whether that interaction produces revenue. The reliability of post-launch measurement depends on tracking metrics that connect directly to financial outcomes.

The Interline reports that virtual try-on users added items to cart 52% more often and converted 35% more frequently than non-users in marketplace tests. These benchmarks offer a useful reference, but every brand's results vary by price point, product category, and implementation quality. To set realistic targets, we compare try-on-enabled pages against standard pages within the same store rather than relying on industry averages alone.

A try-on API integration enables granular tracking when it connects to analytics platforms. The metrics that provide certainty about ROI include:

- SKU return rate: We compare return percentages on try-on-enabled products against the same products before deployment.
- Conversion rate lift: We measure the share of sessions with a try-on interaction that end in a completed purchase, then compare it against the conversion rate of sessions without a try-on interaction.
- Cart addition rate: We track how often shoppers add a product to cart specifically after they use the fitting feature.
- Revenue per visitor: We isolate the revenue contribution of pages with virtual handbag fitting active.
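The four metrics above can be computed from the same handful of counters exported by an analytics platform. The sketch below is a minimal illustration: the `CohortStats` type and its field names are hypothetical, sessions stand in for visitors, and the sample numbers are made up rather than drawn from any benchmark.

```python
from dataclasses import dataclass


def _ratio(numerator: float, denominator: float) -> float:
    """Safe division that returns 0.0 for an empty denominator."""
    return numerator / denominator if denominator else 0.0


@dataclass(frozen=True)
class CohortStats:
    sessions: int
    cart_adds: int
    orders: int
    returns: int
    revenue: float

    @property
    def cart_add_rate(self) -> float:
        return _ratio(self.cart_adds, self.sessions)

    @property
    def conversion_rate(self) -> float:
        return _ratio(self.orders, self.sessions)

    @property
    def return_rate(self) -> float:
        return _ratio(self.returns, self.orders)

    @property
    def revenue_per_visitor(self) -> float:
        return _ratio(self.revenue, self.sessions)


def relative_lift(treated: float, baseline: float) -> float:
    """Relative change of the try-on cohort versus the baseline cohort."""
    return _ratio(treated - baseline, baseline)


# Made-up sample data: sessions with and without a try-on interaction.
try_on = CohortStats(sessions=4000, cart_adds=600, orders=160,
                     returns=24, revenue=28800.0)
standard = CohortStats(sessions=4000, cart_adds=400, orders=120,
                       returns=30, revenue=21600.0)

lift = relative_lift(try_on.conversion_rate, standard.conversion_rate)
print(f"Conversion lift: {lift:.1%}")
print(f"Return rate: {try_on.return_rate:.1%} vs {standard.return_rate:.1%}")
print(f"Revenue per visitor: ${try_on.revenue_per_visitor:.2f} "
      f"vs ${standard.revenue_per_visitor:.2f}")
```

Shoppers who choose to use try-on may already have higher purchase intent, so a randomized split of traffic gives a cleaner comparison than raw cohorts like these.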

Consumer Privacy Trust with Handbag Try-On

Handbag try-on features require camera access, and that requirement triggers legitimate privacy concerns. Shoppers do not just open their camera. They grant brands visual access to their physical environment and their body. The way a brand handles this moment determines whether the feature builds trust or erodes it.

BrandXR found that 75% of consumers who use AR or VR in shopping reported higher satisfaction with the experience. That satisfaction depends on confidence that the data stays protected. Compliance with the General Data Protection Regulation in the European Union and the California Consumer Privacy Act in the United States is mandatory wherever those laws apply. Brands that operate in Illinois also face the Biometric Information Privacy Act, and this law imposes strict requirements on the collection of biometric identifiers such as scans of face geometry.

Transparent communication serves as the most effective adoption tool. A brief notice in plain language that appears before the camera activates should explain what data the feature collects, whether the system stores any images, and how long the system retains any stored data. Most well-designed handbag try-on tools process the camera feed locally on the device and do not transmit images to a server.

Stating this local processing plainly in the notice addresses the shopper's primary fear. Brands that treat privacy disclosures as a trust-building opportunity rather than a legal checkbox see higher feature adoption because shoppers feel respected. This established trust allows brands to connect the try-on feature to their omnichannel strategy.

Omnichannel Strategy Connection

Digital fitting delivers the most value when it connects to the broader shopping journey instead of existing as an isolated widget on a product page. Rawshot projected that social commerce sales of accessories would triple by 2025, and this growth means the try-on experience needs to travel wherever shoppers discover products, such as Instagram feeds, email campaigns, or in-store kiosks.

The accuracy of product visualization improves cross-category selling. When a shopper sees a crossbody bag against their frame, the system can suggest coordinating items such as a wallet in the same leather or a scarf that complements the hardware tone. These styling recommendations feel natural because the shopper has already engaged with the brand's visual tools and established a context for their preferences.

Seasonal campaigns benefit from this integration as well. A Mother's Day gift guide that pairs the handbag try-on feature with outfit coordination previews gives shoppers a reason to explore beyond a single product page. This strategy leads to longer session durations and higher average order values, and these metrics compound the return on the initial investment.

Conclusion

In summary, fashion brands no longer need luxury-tier budgets to deploy handbag try-on technology. Gen AI conversion tools have compressed asset creation costs to levels that mid-range retailers can absorb within a single quarter, and standard platform plugins handle the integration with a modest amount of developer setup. The technology delivers measurable results when it addresses the specific anxieties that cause returns rather than applying a generic visual overlay.

Starting with the highest-return SKUs, tracking conversion and return rate together, and expanding the catalog systematically turns a pilot into a compounding revenue asset. WEARFITS provides the virtual try-on infrastructure and 3D asset pipeline that makes this process accessible for fashion brands at any scale. Try our platform to see how accurate handbag try-on translates into fewer returns and stronger purchase confidence on your product pages.