Introduction

A new rendering approach fundamentally restructures digital twin technology. Gaussian splatting twins compress what previously required weeks of photogrammetry processing into hours, and they deliver visual fidelity that mesh-based models structurally cannot match. Established organizations such as Bentley Systems and defense simulation providers have already moved past research evaluation into production deployment.

According to industry observations, 3D Gaussian Splatting went from research paper to de facto industry standard in three years, a faster timeline than the historical adoption of JPEG. This rapid transition indicates that the technology solves real business problems. A Khronos Group standards process is now working to resolve the interoperability questions that historically blocked enterprise adoption.

We prepared this data-grounded briefing to explain where this shift is headed. We will examine the performance data, adoption trends, and operational frameworks to clarify what this means for current and future digital twin investment.

2025 inflection point for enterprise adoption

We observe a distinct shift in how organizations approach digital replication. Gaussian splatting twins have moved beyond academic research facilities and entered commercial production environments. Software vendors now provide enterprise-grade tools that support this new rendering approach to meet growing corporate demand.

For example, Bentley integrates Gaussian splats across cloud services, the iTwin Capture desktop client, and the Cesium ion platform. This broad integration demonstrates that the technology operates as production-ready infrastructure rather than an experimental novelty. Major companies are also collaborating on standardization efforts to establish universal file formats and viewing protocols.

These standardization efforts give businesses the certainty they need to allocate budgets for new digital initiatives. Industry leaders commit resources to build compatible ecosystems and signal long-term viability to the broader market. We see this progress as a clear indicator that organizations can deploy the technology safely without risking stranded investments.

Early adopters no longer face the burden of building custom viewing software from scratch. Instead, they can rely on established commercial pipelines to process, store, and host their spatial data. The technology has moved past the research phase and is now widely used to solve complex documentation problems.

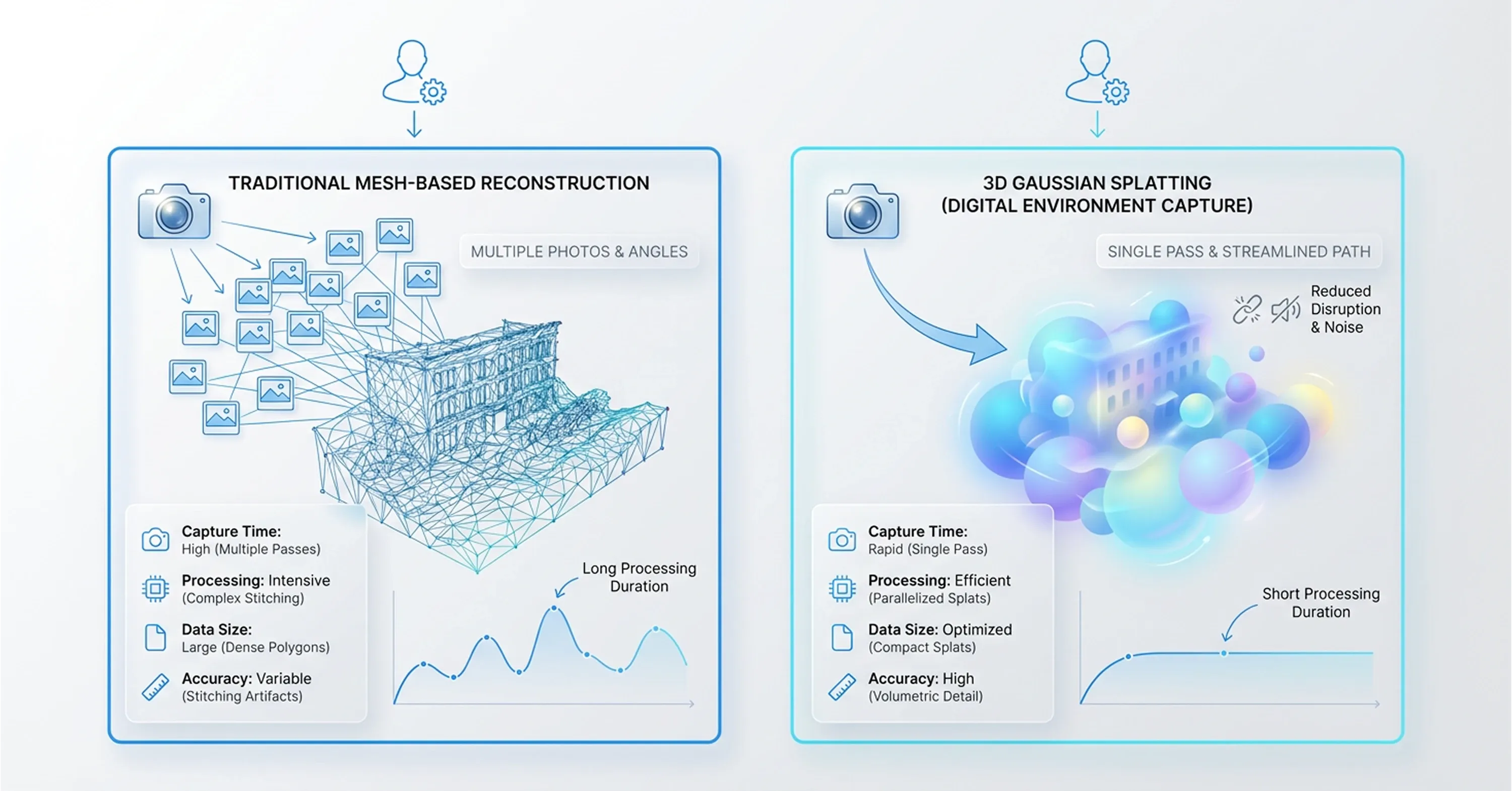

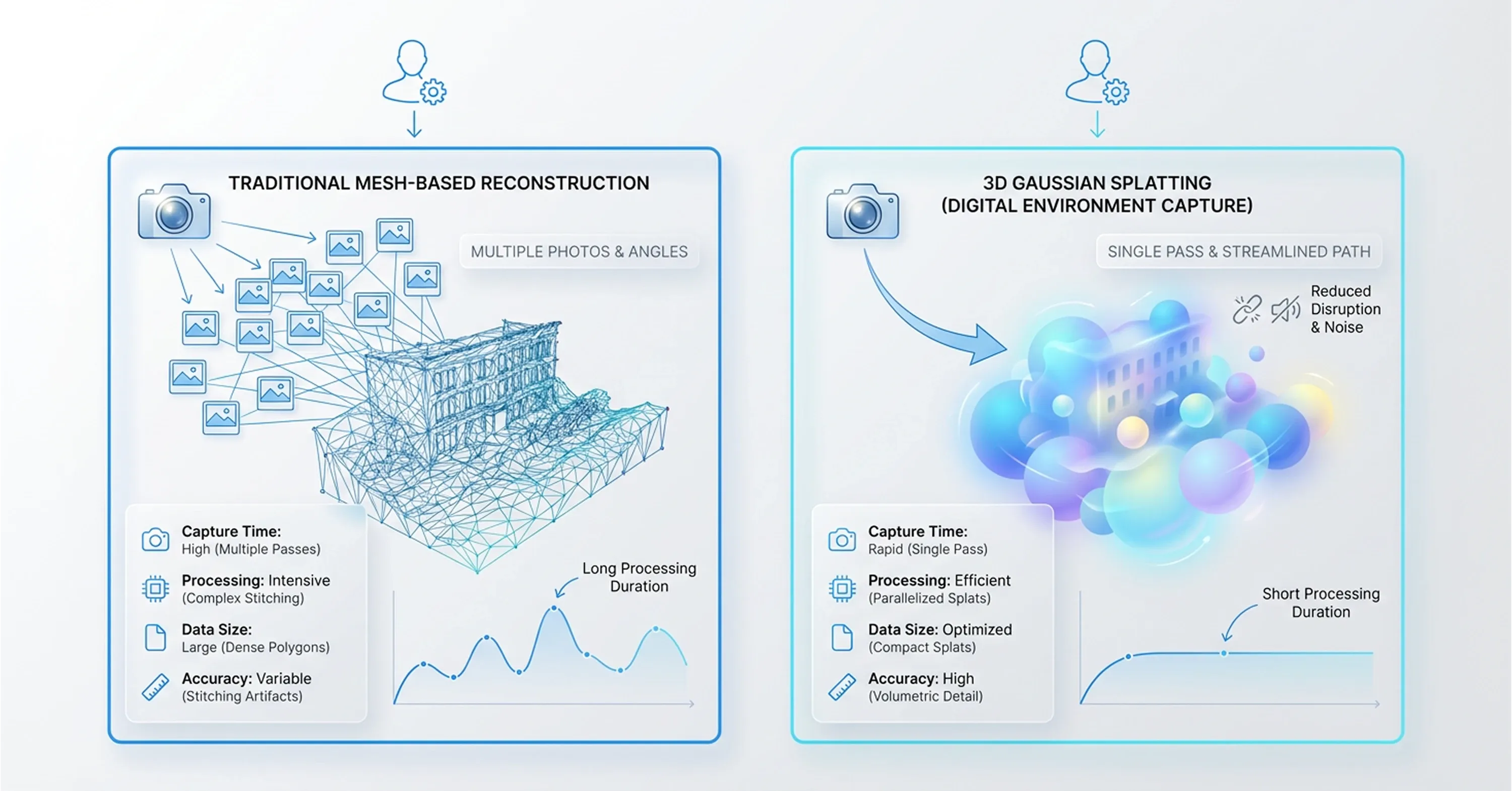

Reconstruction method differences from traditional meshes

Organizations must examine the structural differences between traditional methods and the new rendering approach to understand why this technology handles complex documentation problems so well. Traditional photogrammetry creates a polygon mesh to represent physical shapes. This mesh-based method struggles to capture reflective surfaces, transparent materials, and fine details like thin wires.

Conversely, 3D Gaussian splatting replicas use millions of overlapping, semi-transparent 3D Gaussians (soft ellipsoidal blobs of color) to represent volume and light characteristics. This mathematical approach eliminates the need to calculate strict surface geometries. As a result, the new method delivers visual clarity that mesh models structurally cannot match. The reconstruction speed also differs drastically between the two techniques.
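The blending of overlapping blobs described above can be sketched as front-to-back alpha compositing at a single pixel. This is a simplified illustration, not a production renderer: it assumes the Gaussians have already been projected to 2D screen space, and the dictionary field names (`mean`, `cov`, `color`, `opacity`, `depth`) are hypothetical names chosen for this example.

```python
import numpy as np

def gaussian_weight(pixel, mean, cov):
    """Unnormalized 2D Gaussian falloff exp(-0.5 * d^T cov^-1 d) at a pixel."""
    d = np.asarray(pixel, dtype=float) - np.asarray(mean, dtype=float)
    return float(np.exp(-0.5 * d @ np.linalg.inv(cov) @ d))

def composite_pixel(pixel, splats):
    """Blend splats front to back at one pixel.

    Each splat is a dict with hypothetical keys:
    mean (2D center), cov (2x2 covariance), color (RGB), opacity, depth.
    Returns the accumulated color and the remaining transmittance.
    """
    color = np.zeros(3)
    transmittance = 1.0  # fraction of light not yet blocked by nearer splats
    for s in sorted(splats, key=lambda s: s["depth"]):  # nearest first
        alpha = s["opacity"] * gaussian_weight(pixel, s["mean"], s["cov"])
        color += transmittance * alpha * np.asarray(s["color"], dtype=float)
        transmittance *= 1.0 - alpha
    return color, transmittance

# Two half-opaque splats centered on the same pixel: red in front of blue.
identity = np.eye(2)
splats = [
    {"mean": (0, 0), "cov": identity, "color": (0, 0, 1), "opacity": 0.5, "depth": 2.0},
    {"mean": (0, 0), "cov": identity, "color": (1, 0, 0), "opacity": 0.5, "depth": 1.0},
]
pixel_color, remaining = composite_pixel((0, 0), splats)
```

Because each blob contributes a soft, view-dependent amount of color rather than a hard surface, no explicit polygon geometry is needed to produce the final pixel.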

According to recent mobile platform updates, Gaussian splatting enables faster 3D capture than traditional photogrammetry because it requires only one walkthrough and avoids hundreds of photos with long processing times. Operators capture spatial data swiftly and generate accurate models within minutes rather than days. This rapid processing provides flexibility for teams that need to document changing environments daily.

Furthermore, new digital reconstruction methods allow businesses to capture physical textures with high precision. We recognize that this technical shift changes how long field teams spend collecting visual data. The reduced capture time translates directly into lower labor costs and less disruption to regular facility operations.

Performance case and benchmark data analysis

Lower labor costs and reduced capture times stem directly from the underlying mathematical efficiency, and specific performance metrics provide the strongest argument for transitioning to the new rendering architecture. We track community benchmarks and proprietary comparisons to evaluate how these systems perform under heavy enterprise workloads.

The numbers show that organizations do not need expensive proprietary platforms to achieve high-fidelity results. Open-source libraries now match the output quality of closed commercial solutions, and this fact challenges existing budget assumptions. For example, AMD documentation reports that GSplat-30k achieves a peak signal-to-noise ratio (PSNR) of 29.15 dB and a Structural Similarity Index (SSIM) of 0.87 in benchmark evaluations.
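For readers who want to reproduce this kind of measurement on their own captures, PSNR can be computed from a reference photo and a rendered view with the standard definition, 10 · log10(MAX² / MSE). The sketch below assumes both images are arrays of the same shape with pixel values scaled to the range 0 to 1; the function name `psnr` is our own and does not refer to any particular library.

```python
import numpy as np

def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two same-shaped images."""
    reference = np.asarray(reference, dtype=np.float64)
    rendered = np.asarray(rendered, dtype=np.float64)
    mse = np.mean((reference - rendered) ** 2)  # mean squared pixel error
    if mse == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / mse))

# Synthetic example: a render that is off by 0.1 at every pixel.
reference = np.zeros((4, 4, 3))
rendered = np.full((4, 4, 3), 0.1)
score = psnr(reference, rendered)
```

As a rough guide, a PSNR of 29.15 dB corresponds to a root-mean-square pixel error of about 3.5 percent of the full intensity range.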

These high scores indicate excellent visual accuracy and fidelity compared to the original source images. Furthermore, optimization efforts have reduced the computational burden required to generate Gaussian splatting digital twin models. The open-source gsplat library consumes less GPU memory and requires 15% less training time than the original implementation.

Such efficiency gains give smaller teams a distinct advantage when deploying spatial technology on limited hardware budgets. They can process large datasets locally without purchasing expensive cloud computing clusters. We know that maintaining strict visual standards requires reliability across all rendering pipelines.

When companies build real-time spatial visualization tools, they depend on these optimized libraries to deliver consistent frame rates. These performance benchmarks prove that the technology meets rigorous enterprise requirements while remaining cost-effective to deploy widely.

Industry adoption signals across sectors

Because the technology remains cost-effective and meets rigorous enterprise requirements, different industries integrate spatial computing technology to solve specific operational challenges and reduce physical site visits. We monitor these adoption patterns to understand how enterprises translate rendering speed into measurable business value. Companies no longer view spatial reconstruction as an experimental capability reserved for marketing demonstrations. Instead, they embed Gaussian splatting twins directly into their core operational workflows.

This transition happens across multiple sectors simultaneously because the underlying mathematical approach solves universal documentation problems. Teams require accurate spatial data to make informed decisions, regardless of whether they manage a pharmaceutical production line or oversee highway construction. We see organizations deploying 3D Gaussian splatting replicas to document complex physical assets rapidly and securely.

These early deployments establish practical frameworks for other businesses to follow. The technology proves useful for groups that historically struggled with slow photogrammetry processing times or inaccurate polygon meshes. Manufacturing, defense, and infrastructure organizations use this technology to optimize their daily operations. Specific use cases highlight the practical benefits of modern spatial reconstruction techniques.

Manufacturing and quality control

The manufacturing sector provides one of the clearest use cases for modern spatial reconstruction techniques because factory floors demand rigorous safety standards and constant operational monitoring. Camera-based reconstruction and object detection allow managers to conduct remote inspections without stepping onto the production floor.

This method provides operational insight, and it helps maintain cleanroom protocols and avoid hazardous zones. We see facilities using these models for as-built verification to ensure machinery aligns with original engineering schematics. For example, engineers deployed the PerfCam framework in pharmaceutical production lines for real-time digital twin data extraction and continuous monitoring.

Such implementations boost overall facility efficiency because they identify bottlenecks instantly. Production managers can review a high-fidelity spatial recording of the assembly line to diagnose mechanical issues or verify safety compliance. This approach eliminates the need to halt production for manual site surveys. Organizations reduce costs daily because they minimize unexpected downtime and optimize their spatial layouts through remote observation.

Defense and simulation environments

Just as manufacturing facilities optimize layouts through remote observation, military organizations use spatial replicas to address persistent challenges in developing accurate virtual training environments within strict budget constraints. Traditional simulation development requires significant manual labor to recreate physical locations accurately. However, teams now generate spatial replicas to convert physical location scans into photorealistic virtual environments rapidly.

This rapid conversion addresses the longstanding cost and timeline problems that historically plagued simulation development. Recent collaborative research highlights these new capabilities. A joint project between the military and researchers demonstrates that Gaussian splatting can model complex environments in high detail.

Trainees can navigate through visually accurate replicas of specific urban landscapes or remote outposts before they deploy to those actual locations. We know that high-fidelity simulations directly improve troop readiness and situational awareness. Rapid spatial reconstruction allows defense departments to update their virtual scenarios continuously to reflect current ground conditions, and this ensures training exercises remain relevant and effective.

Infrastructure and asset management

The ability to update virtual scenarios continuously to reflect current ground conditions also benefits the public sector, where large-scale infrastructure documentation requires strong deployment pathways to handle large spatial datasets. Engineering firms map entire highway systems, bridge networks, and utility grids to monitor structural integrity.

We observe that multiple deployment pathways enable organizations to manage these large assets effectively. Teams use three distinct deployment models to ensure operational stability. First, desktop processing applications compile raw capture data securely on local workstations. Second, cloud processing services distribute heavy computational workloads across remote servers. Third, web streaming platforms deliver high-fidelity spatial models directly to client browsers.

These flexible deployment pathways allow teams to update their digital records continuously. Inspectors can stream highly detailed models of a bridge via a web browser to check for structural fatigue or rust and avoid dispatching a physical team. This continuous update potential changes static engineering records into dynamic management tools that save municipalities considerable time and money during regular maintenance cycles.

Importance of open standards for investment decisions

While dynamic management tools save municipalities considerable time and money, organizations still face the risk that proprietary file formats might trap enterprise data within a single vendor's software ecosystem. Companies rightly hesitate to invest large budgets into new technologies that might become obsolete or inaccessible if a vendor changes its pricing model.

However, the Khronos Group is developing the KHR_gaussian_splatting extension for glTF to establish a universal standard for these new spatial files. This standardization effort signals that the software industry recognizes and addresses vendor lock-in risks. We advise organizations to consider this specific standardization trajectory when they assess the long-term viability of their digital asset investments.

A universal format provides a solid foundation for cross-platform interoperability. Leading graphics executives confirm the significance of this transition. Patrick Cozzi, Chief Platform Officer at Bentley Systems, notes that glTF standardization represents a major step forward for the entire spatial computing ecosystem.

When multiple software providers agree on a common file architecture, they foster trust among corporate buyers. Organizations can generate Gaussian splatting digital twin models with one capture tool and confidently view them in a different engineering application. This interoperability ensures that enterprise spatial data remains accessible and useful for decades.
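One practical interoperability check is to confirm which extensions an exported file declares. The glTF 2.0 format lists them in a top-level `extensionsUsed` array, so a JSON-based `.gltf` file can be inspected with a few lines of code. The sketch below is illustrative only: the function name is ours, the sample document is made up, the extension is still under development and its final name may change, and binary `.glb` containers would need their JSON chunk extracted first.

```python
import json

def declares_extension(gltf_text, extension_name):
    """Return True if a .gltf JSON document lists the given extension.

    Names are compared case-insensitively as a precaution, because the
    extension discussed here has not been finalized.
    """
    doc = json.loads(gltf_text)
    wanted = extension_name.lower()
    return any(name.lower() == wanted for name in doc.get("extensionsUsed", []))

# Made-up minimal document used only to demonstrate the check.
sample = json.dumps({
    "asset": {"version": "2.0"},
    "extensionsUsed": ["KHR_gaussian_splatting"],
})
has_splats = declares_extension(sample, "KHR_gaussian_splatting")
```

A check like this can run in an ingestion pipeline to flag files that rely on vendor-specific extensions before they enter a long-term archive.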

Real barriers to enterprise implementation

Even though accurate spatial reconstruction improves digital workflows, Gaussian splatting digital twin models introduce specific friction points that organizations must understand before they commit resources. Software vendors have not yet published thorough troubleshooting guides, and these documentation gaps currently complicate the integration process.

Teams often struggle with rendering complexity when they combine the new models with existing polygon meshes in mixed spatial environments. However, a realistic assessment reveals that most of these hurdles are transitional rather than structural.

The underlying technology demonstrates mathematical soundness and processing stability, but a temporary expertise gap across the workforce causes the primary friction. Because the volumetric rendering approach is new, few spatial engineers possess the necessary skills to optimize these scenes. We observe that companies successfully navigate this gap when they invest in targeted training rather than wait for automated software updates.

Organizations must distinguish between temporary learning curves and fundamental technological flaws to build scalable enterprise adoption strategies. Software engineers constantly update the core processing libraries, and the rendering complexity naturally decreases as vendors release better integration tools. Once teams overcome these transitional skills gaps, they must determine how to fund and scale their spatial computing infrastructure.

Cost scalability frameworks

Enterprises must match the spatial architecture with the available budget when they fund and scale their spatial computing infrastructure. Organizations need to evaluate the realistic resource requirements to process Gaussian splatting twins at an enterprise scale. We see that companies typically choose between cloud processing and edge deployment.

Edge deployment allows teams to process data locally on dedicated hardware, and this keeps sensitive spatial records entirely within the corporate firewall. Cloud processing distributes the heavy computational load across external servers, but it incurs recurring monthly costs.
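The trade-off between an upfront hardware purchase and recurring cloud fees can be framed as a simple break-even calculation. The sketch below is a deliberately minimal model; the function name and all cost figures are hypothetical placeholders, and it ignores factors such as depreciation, staffing, and utilization.

```python
def break_even_months(edge_upfront_cost, edge_monthly_cost, cloud_monthly_cost):
    """Months until an edge workstation costs less than cloud processing.

    Returns None when cloud is not more expensive per month, because the
    upfront edge purchase is then never recovered.
    """
    monthly_saving = cloud_monthly_cost - edge_monthly_cost
    if monthly_saving <= 0:
        return None
    return edge_upfront_cost / monthly_saving

# Hypothetical figures for illustration only, not vendor pricing.
months = break_even_months(
    edge_upfront_cost=12000,   # workstation purchase
    edge_monthly_cost=200,     # power and maintenance
    cloud_monthly_cost=1200,   # recurring instance fees
)
```

With these placeholder numbers, the workstation pays for itself after 12 months; teams should substitute their own quotes before drawing conclusions.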

Companies must select the right hardware components to maximize scalability. A recent GPU selection study shows that the NVIDIA A10G is a better fit for production workflows than more expensive A100 multi-GPU setups because it offers 24 GB of video memory (VRAM). This hardware efficiency allows companies to process massive spatial datasets and avoid excessive spending on top-tier computing instances.

Organizations adopt a three-tier deployment framework to establish successful cost-efficient modeling pipelines. They process highly sensitive data on local edge workstations, run large-scale site reconstructions on mid-tier cloud instances, and deliver the final volumetric models through web-based streaming platforms.
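The three-tier framework above amounts to a small routing rule: data sensitivity decides where processing happens, and delivery always goes through web streaming. A minimal sketch of that rule follows; the class, field, and tier names are hypothetical labels, not identifiers from any vendor product.

```python
from dataclasses import dataclass

@dataclass
class CaptureJob:
    name: str
    sensitive: bool  # True if the data must stay inside the corporate firewall

def plan_pipeline(job):
    """Return (processing_tier, delivery_tier) for a capture job."""
    processing = "edge-workstation" if job.sensitive else "mid-tier-cloud"
    return processing, "web-streaming"

# Example jobs, invented for illustration.
cleanroom = plan_pipeline(CaptureJob("cleanroom-line-3", sensitive=True))
highway = plan_pipeline(CaptureJob("highway-segment-12", sensitive=False))
```

Real deployments would add further criteria, such as dataset size or turnaround time, but encoding the rule explicitly makes the cost assumptions easy to audit.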

This tiered approach prevents budget overruns and ensures that field operators can access the structural data from any mobile device. These hardware trade-offs help enterprises compare the financial impact of the new volumetric technology against their existing spatial workflows.

Gaussian splatting twins versus traditional methods

After enterprises evaluate the financial impact of new volumetric technology against existing spatial workflows, a direct comparison between traditional documentation methods and modern rendering techniques reveals how the new approach delivers competitive business value.

We base our evaluation of spatial technologies on Total Cost of Ownership, reconstruction speed, and maintenance effort instead of pure technical computer graphics theory. Traditional photogrammetry requires extensive manual cleanup to fix broken geometries, and this constant intervention drives up labor costs significantly.

In contrast, Gaussian splatting twins capture light and volume directly from source images and eliminate weeks of manual mesh editing. Industry analysis confirms that this new volumetric method requires lower computational resources than photogrammetry and Neural Radiance Fields, and this makes it ideal for real-time applications and large physical environments.

We track four distinct business metrics to understand the practical operational impact.

- Reconstruction time drops from days to hours because the mathematical model bypasses complex polygon geometry calculations.
- Visual fidelity improves for reflective surfaces and transparent materials, and this reduces the need for costly manual rework by digital artists.
- Maintenance effort decreases since field teams can update specific facility sections with a quick video walk-through instead of a massive laser scanning operation.
- Simulation integration difficulty lessens because developers can import the raw volumetric data directly into modern game engines and avoid full environment reconstruction.

These improved business metrics provide a clear foundation for organizations to take decisive steps toward implementation and testing.

Strategic recommendations for organizations

Organizations can use this foundation to take decisive steps toward implementation, and a structured strategy helps them test new spatial technologies while controlling financial risk. A large deployment launched all at once often creates isolated data silos and frustrates engineering teams. Instead, companies should frame a pilot program that generates measurable operational data before they commit to a full deployment.

We recommend the following sequence to start the evaluation process.

- Select a single facility or specific operational workflow to serve as the bounded pilot program.
- Use standard mobile hardware to capture the physical environment and test the baseline data collection speed.
- Use mid-tier cloud computing instances to process the 3D Gaussian splatting replicas and measure actual computational resource costs.
- Compare the resulting visual fidelity and processing time against the existing mesh-based digital twin documentation.
- Monitor the Khronos Group standardization progress and ensure the generated files remain compatible with future engineering software updates.
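The comparison step in this sequence is easier to act on when pilot results are recorded against the existing baseline in a consistent way. The sketch below computes the relative change for each shared metric; the function names, metric names, and figures are hypothetical examples, not measured results.

```python
def relative_change(baseline, pilot):
    """Fractional change from baseline to pilot (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (pilot - baseline) / baseline

def compare_pilot(baseline_metrics, pilot_metrics):
    """Relative change for every metric present in both dictionaries."""
    return {
        name: relative_change(baseline_metrics[name], pilot_metrics[name])
        for name in baseline_metrics
        if name in pilot_metrics
    }

# Placeholder numbers for illustration only.
baseline = {"capture_minutes": 90.0, "processing_hours": 48.0}
pilot = {"capture_minutes": 15.0, "processing_hours": 6.0}
report = compare_pilot(baseline, pilot)
```

Here `report["processing_hours"]` is -0.875, meaning an 87.5 percent reduction for these made-up inputs; the same structure works for cost or fidelity metrics collected during the pilot.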

This measured approach ensures that enterprises build the necessary spatial computing expertise and validate the business case before they scale the technology across their entire operational portfolio.

Conclusion

Open standards development and production deployments across multiple sectors are pushing Gaussian splatting twins toward a tipping point. We consider these developments important because they mirror the period when point-cloud technology shifted from an optional capability to a necessity, even for late adopters. We expect the current barriers, namely immature documentation and limited in-house skills, to ease as tooling and training mature.

The window for early adoption is open now, and organizations that begin structured evaluation today will build the spatial computing expertise that late adopters will struggle to acquire quickly. WEARFITS helps enterprises move from evaluation to production by converting physical assets into accurate, deployment-ready volumetric replicas. Try our 3D digitization tools to see how your existing inventory translates into the rendering pipeline that modern digital twin workflows demand.