Introduction

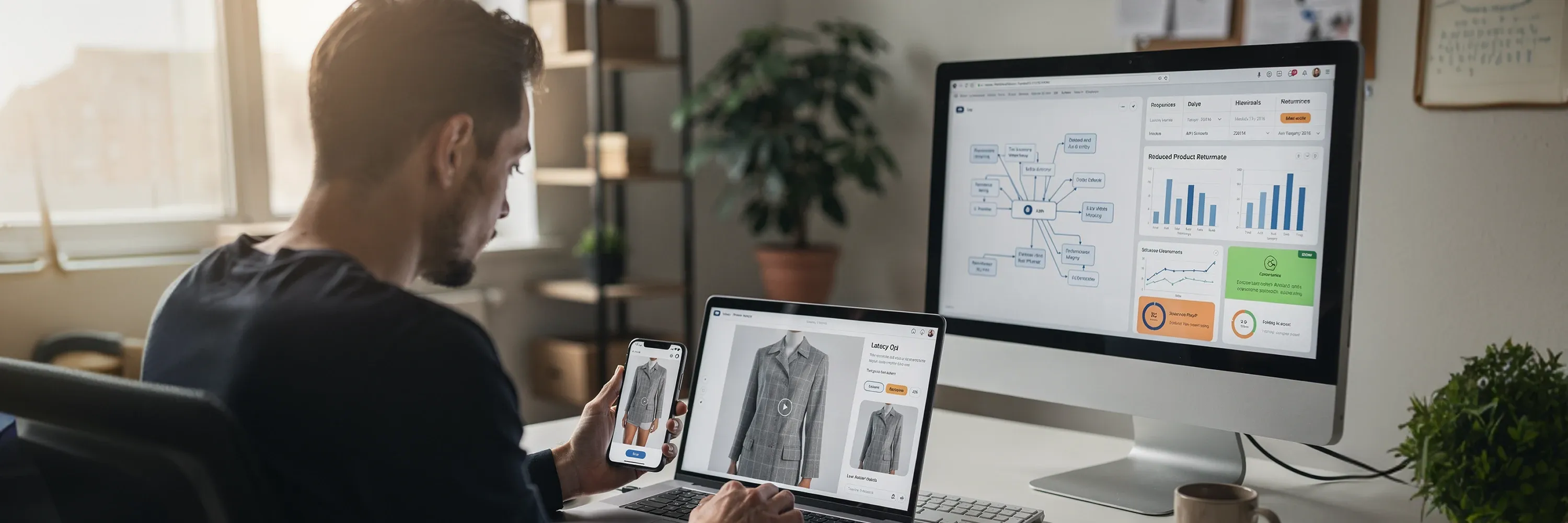

Online retail faces a profit challenge that erodes margins despite growing sales figures. We define this challenge as the "visualization gap" because customers cannot accurately gauge how a product will look or fit on their own bodies. This creates a disconnect between expectation and reality. This uncertainty drives a high return rate. Poor fit and sizing issues cause 70% of fashion retail returns annually.

Retailers need a strong infrastructure solution to bridge this gap. The try-on API serves as this digital bridge. It connects complex 3D assets and body data directly to the storefront and provides instant visual confirmation. We integrate this technology to move beyond static imagery. This offers a dynamic experience that aligns the digital product with the physical one. This shift helps solve the return rate problem and prepares our platforms for a sustainable future.

Visualization Gap and Return Rates

The financial stability of online retail depends heavily on bridging the visualization gap so a customer can perceive a product accurately before purchasing it. A deficit in confidence occurs when a shopper cannot physically interact with a garment. This visualization gap forces the customer to guess how a specific size or style will drape on their unique body type. Hesitation often leads to cart abandonment. A more expensive outcome happens when the customer buys the item, realizes the poor fit, and sends it back.

Recent data highlights the severity of this issue. According to the National Retail Federation, shoppers will return an estimated 19.3% of sales in 2025, and clothing leads the categories with approximately a 25% return rate. This churn creates a logistical nightmare and erodes profit margins. Retailers must process, inspect, and often discount these returned items, and this turns a potential profit into a net loss.
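To make the margin erosion concrete, the sketch below works through the arithmetic for a hypothetical batch of orders. Only the 25% clothing return rate comes from the figures above. The order count, average order value, gross margin, per-return handling cost, and the 15% comparison rate are illustrative assumptions, not measured data.

```python
def net_profit(orders, avg_order_value, gross_margin, return_rate, cost_per_return):
    """Profit after returns: kept orders earn margin, returned orders cost handling."""
    returned = orders * return_rate
    kept = orders - returned
    return kept * avg_order_value * gross_margin - returned * cost_per_return


# Hypothetical inputs (assumptions): 1,000 orders, $80 average order value,
# 40% gross margin, $15 to process and inspect each returned item.
baseline = net_profit(1000, 80.0, 0.40, 0.25, 15.0)  # 25% return rate
improved = net_profit(1000, 80.0, 0.40, 0.15, 15.0)  # assumed lower return rate

print(f"Profit at 25% returns: ${baseline:,.0f}")
print(f"Profit at 15% returns: ${improved:,.0f}")
```

Under these assumed numbers, every returned order both forfeits its margin and adds a handling cost, which is why a modest change in the return rate moves profit disproportionately.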

Product quality rarely causes this issue; the inability to validate fit does. The WJAETS Academic Journal notes that 70% of online fashion shoppers cite the inability to try on items as their primary concern when shopping online. To close this gap, we need real-time virtual try-on precision that mimics the physical fitting room experience. Providing accurate visual data replaces customer guesswork with certainty and reduces the friction that drives high return rates. This solution relies on a specific technical framework known as the try-on API architecture.

Try-On API Architecture

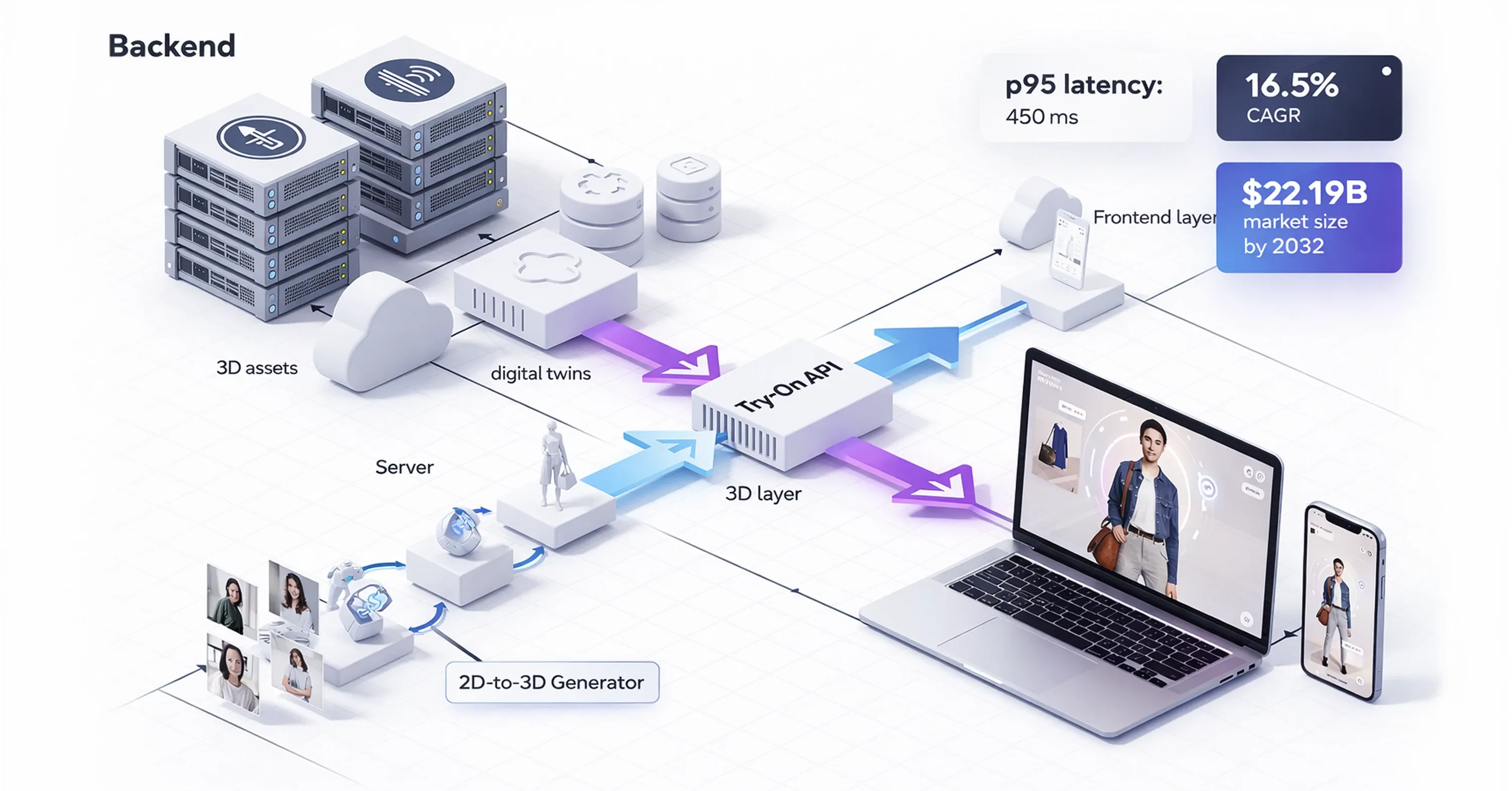

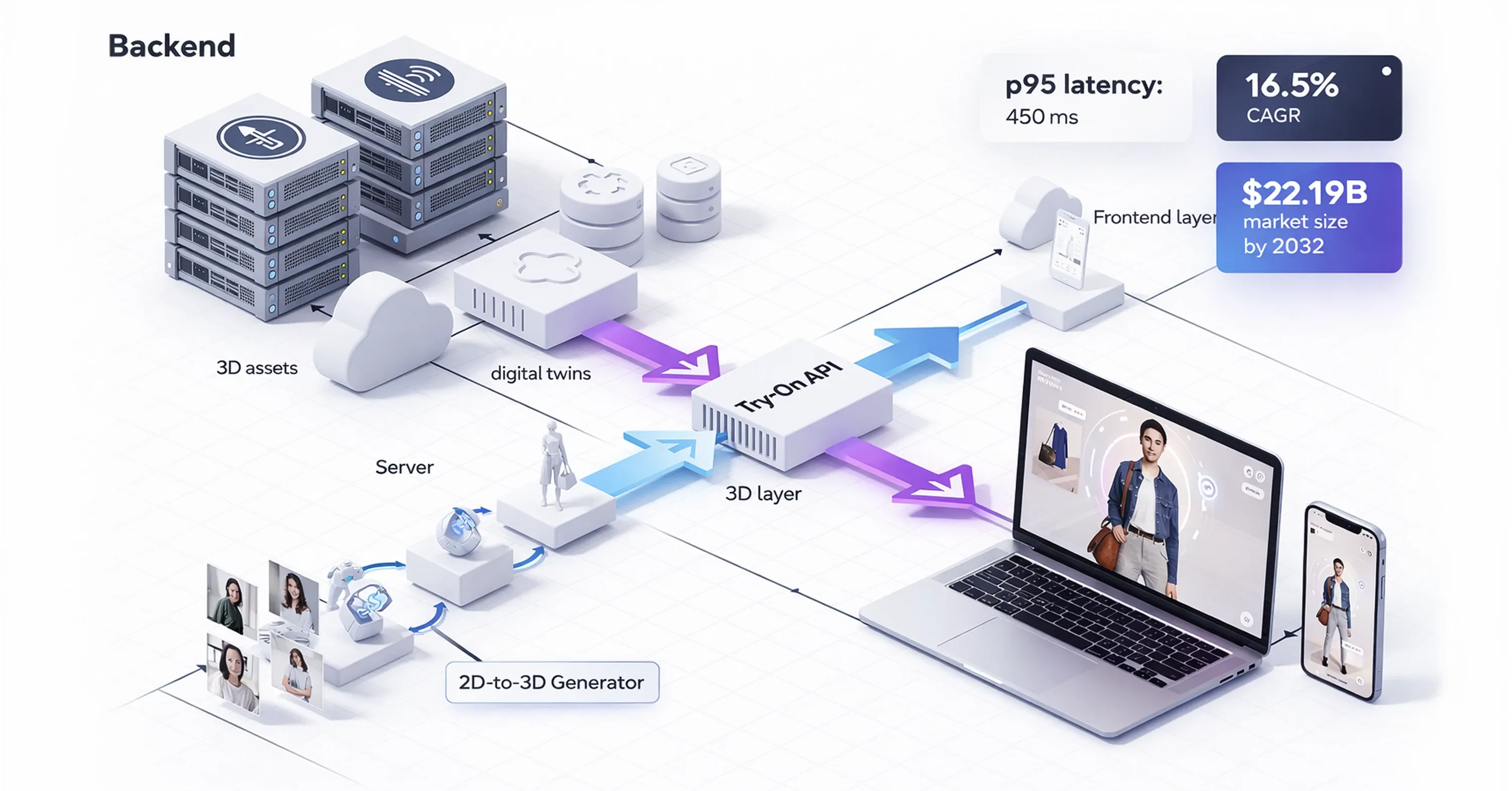

A try-on API functions as the critical connector between complex 3D assets stored on a server and the user interface where the shopping happens. It acts as the infrastructure that delivers visual data to the customer's screen. Modern e-commerce setups often shift toward headless commerce architecture. This approach decouples the frontend presentation layer from the backend logic. Retailers update their product catalogs, pricing, and 3D models independently and do not disrupt the visual experience on the website or mobile app.

Demand for high-quality visual assets to feed these systems is growing rapidly. Analysts project the 3D mapping and modeling market to reach $22.19 billion by 2032, growing at a 16.5% CAGR from $6.77 billion in 2024. As retailers invest in these assets, they need a strong method to display them. Integrating a 2D-to-3D generator for footwear and apparel allows platforms to create the necessary digital twins that the API then serves to the consumer.

Performance matters just as much as visual fidelity. If the system lags, the user disengages. Advanced virtual try-on systems achieve a p95 latency of 450 milliseconds for text-only queries on specialized hardware, meaning 95% of those requests complete within that time. This speed keeps the experience feeling smooth to the user.
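A p95 figure can be checked against a latency budget with a few lines of code. The sketch below uses the nearest-rank percentile method on a list of hypothetical latency samples; the sample values and the `BUDGET_MS` name are assumptions for illustration, and only the 450 ms target comes from the text above.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical request latencies in milliseconds (illustrative only).
latencies_ms = [210, 250, 265, 280, 300, 310, 330, 350, 380, 400,
                410, 420, 425, 430, 435, 440, 445, 448, 450, 900]

BUDGET_MS = 450  # target taken from the p95 figure quoted above
p95 = percentile(latencies_ms, 95)
print(f"p95 = {p95} ms, within budget: {p95 <= BUDGET_MS}")
```

Tracking a high percentile rather than the average is a deliberate choice: it describes what the slower requests experience while staying insensitive to a single extreme outlier, such as the 900 ms sample here.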

Whether we use a server-side API or a client-side e-commerce SDK, the goal remains the same: to render the digital product on the user's image instantly and maintain engagement and trust. Retailers who achieve this performance standard can then select one of the core integration methods.

Core Integration Methods

Implementing virtual try-on technology requires a strategic approach that aligns with a retailer's existing technical stack and business goals. No single method works for every platform. Some retailers have large engineering teams capable of building custom solutions, while others rely on pre-built platforms. Choosing the wrong integration path leads to technical debt and poor performance. CTOs identify integration challenges as a significant barrier, and approximately 65% of fashion retailers report them as a major obstacle to adoption.

We evaluate the three primary deployment methods to navigate these obstacles: SDKs, REST APIs, and plugins. Each offers a different balance of control, speed, and ease of implementation. Retailers who focus on scalable virtual try-on strategies often mix these methods depending on whether they optimize for a mobile app or a desktop web experience. The decision ultimately dictates the speed of the user experience and the flexibility of the backend management.

SDK Integration for Mobile-First Speed

Retailers who prioritize mobile applications find that a Software Development Kit (SDK) often provides the best performance. An e-commerce SDK installs directly into the mobile app and allows the device's own processor to handle the heavy lifting of rendering 3D images. This local processing eliminates the need to send video data back and forth to a server and significantly reduces latency.

The response is immediate when a customer moves their camera to see how a shoe looks from different angles. This efficiency matters for mobile users who rely on cellular data and may have unstable internet connections. We keep the processing on the device and ensure that the visual experience remains smooth and responsive, regardless of network conditions.

REST API Implementation for Flexibility

A virtual fitting API exposed over REST serves as the standard choice when we integrate virtual try-on into a custom website or a complex backend system. This method sends the user's input data to a remote server, processes the image using powerful cloud computing resources, and returns the result to the browser.

The try-on API approach is highly versatile because it does not rely on the customer's device hardware. It allows retailers to use high-fidelity models that might be too heavy for a smartphone to render locally. Additionally, REST endpoints pair well with asynchronous request handling, so the client can submit multiple requests or run background tasks without freezing the user interface. This makes it an ideal solution for high-traffic web platforms that require strong, server-side management.
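The asynchronous pattern described above is often implemented as "submit a job, then poll for the result". The following is a minimal sketch of that pattern, not any vendor's actual API: `FakeTryOnService`, its methods, and the endpoint paths in the comments are hypothetical stand-ins, and the service runs in memory so the example works without a network.

```python
import asyncio
import itertools


class FakeTryOnService:
    """In-memory stand-in for a remote try-on REST API (hypothetical endpoints)."""

    def __init__(self, render_seconds=0.05):
        self._jobs = {}
        self._tasks = set()  # keep references so background tasks are not garbage collected
        self._ids = itertools.count(1)
        self._render_seconds = render_seconds

    async def post_job(self, photo_id, product_id):
        # Stands in for something like: POST /try-on -> 202 Accepted with a job id
        job_id = f"job-{next(self._ids)}"
        self._jobs[job_id] = {"status": "processing", "result_url": None}
        task = asyncio.create_task(self._render(job_id, photo_id, product_id))
        self._tasks.add(task)
        task.add_done_callback(self._tasks.discard)
        return job_id

    async def get_job(self, job_id):
        # Stands in for something like: GET /try-on/{job_id}
        return dict(self._jobs[job_id])

    async def _render(self, job_id, photo_id, product_id):
        await asyncio.sleep(self._render_seconds)  # simulated cloud rendering time
        self._jobs[job_id] = {
            "status": "done",
            "result_url": f"/renders/{photo_id}-{product_id}.png",
        }


async def request_try_on(service, photo_id, product_id, poll_interval=0.01, timeout=2.0):
    """Submit a render job, then poll until it finishes or the timeout expires."""
    job_id = await service.post_job(photo_id, product_id)
    loop = asyncio.get_running_loop()
    deadline = loop.time() + timeout
    while loop.time() < deadline:
        job = await service.get_job(job_id)
        if job["status"] == "done":
            return job["result_url"]
        await asyncio.sleep(poll_interval)  # yields control so other work keeps running
    raise TimeoutError(f"{job_id} did not finish within {timeout}s")


async def main():
    service = FakeTryOnService()
    # Two try-on requests run concurrently; neither blocks the other.
    return await asyncio.gather(
        request_try_on(service, "user42", "sneaker-a"),
        request_try_on(service, "user42", "sneaker-b"),
    )


results = asyncio.run(main())
print(results)
```

In a real integration, the two service methods would be HTTP calls, and the polling loop could be replaced by a webhook or a streaming connection if the provider offers one.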

Plugin Deployment for Low-Code Platforms

Retailers running on established e-commerce platforms like Shopify, WooCommerce, or Magento find that plugins offer the fastest route to adoption. These solutions package the SDK or API functionality into a pre-configured module that requires little to no custom coding.

Plugins allow business owners to activate virtual try-on features immediately, often with a simple "install" click. While this method offers less customization than a raw API integration, it makes the technology accessible to brands that do not have extensive engineering resources. This approach democratizes the technology and ensures that smaller retailers can compete with industry giants and offer the same level of purchase confidence to their customers. These diverse integration strategies enable retailers to deploy effective real-world applications.

Real-World Applications

We see proof of virtual try-on technology's value when we examine its application across different retail sectors. While the underlying code remains complex, the user experience has become remarkably simple and effective. In the fashion sector, retailers now use hybrid models that combine advanced rendering with user-uploaded photos.

For instance, ASOS recently launched a system that allows shoppers to view clothes on models that look like them or on their own uploaded photos. Their implementation is notable for its speed. Reports indicate that ASOS virtual try-on experiences load in 4-7 seconds, and this speed beats previous industry standards.

The luxury sector also demonstrates how widespread adoption drives engagement. High-end brands use these tools to create experiences that mimic the boutique atmosphere. Gucci, for example, successfully deployed an AR campaign where their Snapchat AR shoe try-on lens reached over 18 million engagements and increased product page views by 188%.

This success is not limited to footwear. We see similar utility when we implement virtual try-on for bags and accessories, and this allows users to check scale and styling instantly.

Retailers who use an e-commerce SDK or try-on API typically focus on three key outcomes:

- Reduced Friction: They minimize the steps between seeing a product and visualizing it on the body.
- Cross-Platform Consistency: They ensure the experience works equally well on a mobile app and a desktop browser.
- Instant Gratification: They prioritize rendering speed to keep the shopper engaged during the critical decision-making moments.

However, retailers must balance this speed with accuracy and user expectations.

Accuracy and User Expectations

While speed drives engagement, the reliability of the output determines whether a customer keeps the product or returns it. We face a distinct challenge in balancing user expectations with technical limitations. A virtual fitting API must calculate complex variables, including fabric drape, lighting conditions, and body measurements, often from a single 2D photograph.

When the software gets this wrong, it erodes trust. For example, an audit of various try-on tools found that they mispredicted waist-to-hip ratio by ±3.7 inches for 68% of participants under 5'2". Such discrepancies occur because standard software often struggles with non-standard body types or poor-quality user photos.

To solve this, we are moving toward systems that rely on 3D depth data rather than simple image overlays. High-fidelity 3D avatars offer a much closer approximation of reality. Recent academic research indicates that 3D avatar-based virtual try-on solutions demonstrate improved accuracy and reduce size prediction errors to 0.7-1.2 cm compared to 2D systems. This level of precision is necessary for items where fit is non-negotiable, such as tailored suits or fitted dresses.

However, technical accuracy is only half the battle. We also have to manage user behavior. If a user uploads a grainy photo taken in a dark room, even the best virtual fitting API will struggle to render an accurate result. Therefore, successful integration guides the user to provide better input data and upgrades the backend processing to handle imperfect conditions. Solving these accuracy challenges allows retailers to meet new standards for sustainability and regulatory compliance.
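One way to guide users toward better input is to run a quick quality check on the photo before it reaches the rendering pipeline. The sketch below is an illustrative pre-check only: the `check_photo` function, its thresholds, and the hint wording are all assumptions, and it works on plain grayscale pixel values so that it needs no imaging library.

```python
def check_photo(pixels, min_width=512, min_height=512, min_brightness=60, min_contrast=20):
    """Return user-facing hints for a photo; an empty list means it passes.

    pixels: rows of grayscale values (0-255).
    All thresholds are illustrative assumptions, not calibrated values.
    """
    values = [v for row in pixels for v in row]
    if not values:
        return ["No image data found. Please upload a photo."]

    hints = []
    height, width = len(pixels), len(pixels[0])
    if width < min_width or height < min_height:
        hints.append("Use a higher-resolution photo.")

    mean = sum(values) / len(values)
    std = (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5
    if mean < min_brightness:
        hints.append("The photo is too dark. Move to a brighter spot.")
    if std < min_contrast:
        hints.append("Low contrast. Try even lighting and a plain background.")
    return hints


# Tiny 2x2 examples with the size limits relaxed so the brightness checks are visible.
dark_photo = [[20, 22], [21, 19]]
clear_photo = [[40, 200], [220, 60]]
print(check_photo(dark_photo, min_width=2, min_height=2))
print(check_photo(clear_photo, min_width=2, min_height=2))
```

Returning hints instead of a simple pass/fail lets the storefront tell the shopper what to fix, which supports the goal of guiding users toward better input rather than silently producing a poor render.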

Sustainability and Regulatory Compliance

The conversation around virtual try-on technology is shifting from profit to regulatory necessity. Governments globally are taking action to curb the environmental impact of the fashion industry, specifically regarding waste.

The European Union has set a strict precedent with the Ecodesign for Sustainable Products Regulation (ESPR). This regulation prohibits destruction of unsold apparel and footwear for large companies starting 19 July 2026, and medium-sized companies follow in 2030. This gives retailers a strong legal incentive to minimize returns, as they can no longer simply discard returned stock that cannot be resold.

A try-on API acts as a primary compliance mechanism. Because these tools help customers make better choices, fewer ill-fitting items leave the warehouse in the first place. Data suggests that AR usage could prevent 42% of online clothing returns. This reduction is critical for meeting new environmental standards.

We can view the implementation of these tools as a three-step compliance strategy:

- Prevention: We use precise visualization to ensure the customer orders the correct item, and this drastically cuts the volume of goods that enter the reverse logistics chain.
- Retention: We use the data gathered from virtual sessions to inform future inventory planning and ensure we produce what people actually want to wear.
- Documentation: We use the analytics provided by the API to demonstrate to regulators that the company takes active, measurable steps to reduce its environmental impact.

These compliance efforts confirm the technology's essential role in the industry.

Conclusion

To summarize, virtual try-on technology has transitioned from a novelty to an essential component of retail infrastructure. Retailers who adopt the try-on API protect their margins from return costs and prepare for the sustainability regulations that are quickly becoming law.

Data shows that accurate visualization creates a more profitable and responsible business model. WEARFITS provides the API and 3D asset infrastructure needed to close the visualization gap, turning standard product photos into precise, real-time fitting experiences that reduce returns before they happen.